-Assign different roles to GPTs to form a collaborative software entity for complex tasks.

+Assign different roles to GPTs to form a collaborative entity for complex tasks.

-

+

@@ -25,48 +25,51 @@ # MetaGPT: The Multi-Agent Framework

+## News

+🚀 Jan. 16, 2024: Our paper [MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework](https://arxiv.org/abs/2308.00352) was accepted for oral presentation **(top 1.2%)** at ICLR 2024, **ranking #1** in the LLM-based Agent category.

+

+🚀 Jan. 03, 2024: [v0.6.0](https://github.com/geekan/MetaGPT/releases/tag/v0.6.0) released; new features include serialization, an upgraded OpenAI package, support for multiple LLMs, and a [minimal example for debate](https://github.com/geekan/MetaGPT/blob/main/examples/debate_simple.py), among others.

+

+🚀 Dec. 15, 2023: [v0.5.0](https://github.com/geekan/MetaGPT/releases/tag/v0.5.0) released, introducing experimental features such as **incremental development**, **multilingual support**, and **multiple programming language support**.

+

+🔥 Nov. 08, 2023: MetaGPT was selected for [Open100: Top 100 Open Source achievements](https://www.benchcouncil.org/evaluation/opencs/annual.html).

+

+🔥 Sep. 01, 2023: MetaGPT topped GitHub Trending Monthly for the **17th time** in August 2023.

+

+🌟 Jun. 30, 2023: MetaGPT is now open source.

+

+🌟 Apr. 24, 2023: First line of MetaGPT code committed.

+

+## Software Company as Multi-Agent System

+

1. MetaGPT takes a **one line requirement** as input and outputs **user stories / competitive analysis / requirements / data structures / APIs / documents, etc.**

2. Internally, MetaGPT includes **product managers / architects / project managers / engineers.** It provides the entire process of a **software company along with carefully orchestrated SOPs.**

1. `Code = SOP(Team)` is the core philosophy. We materialize SOP and apply it to teams composed of LLMs.
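As a toy illustration of `Code = SOP(Team)` (not MetaGPT's actual API: `Role`, `SOP`, and the string-wrapping lambdas are hypothetical stand-ins for LLM-backed agents), the sketch below runs a one-line requirement through the four roles named above in a fixed order, each role consuming the previous role's artifact.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Role:
    """A hypothetical role: a name plus a function that turns one artifact into the next."""
    name: str
    act: Callable[[str], str]


@dataclass
class SOP:
    """A fixed, ordered procedure; each role consumes the previous role's output."""
    steps: List[Role] = field(default_factory=list)

    def run(self, requirement: str) -> List[str]:
        # Keep every intermediate artifact so the hand-offs stay inspectable.
        artifacts = [requirement]
        for role in self.steps:
            artifacts.append(role.act(artifacts[-1]))
        return artifacts


# Stand-in lambdas replace the LLM calls a real role would make.
team = SOP([
    Role("ProductManager", lambda req: f"PRD({req})"),
    Role("Architect", lambda prd: f"Design({prd})"),
    Role("ProjectManager", lambda design: f"Tasks({design})"),
    Role("Engineer", lambda tasks: f"Code({tasks})"),
])

print(team.run("2048 game")[-1])  # Code(Tasks(Design(PRD(2048 game))))
```

The point of the sketch is that the order and hand-offs are fixed by the procedure rather than negotiated by the agents; replacing a lambda with a model call would not change the pipeline's shape.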

-

Software Company Multi-Role Schematic (Gradually Implementing)

-

-## News

-🚀 Jan 03: Here comes [v0.6.0](https://github.com/geekan/MetaGPT/releases/tag/v0.6.0)! In this version, we added serialization and deserialization of important objects and enabled breakpoint recovery. We upgraded OpenAI package to v1.6.0 and supported Gemini, ZhipuAI, Ollama, OpenLLM, etc. Moreover, we provided extremely simple examples where you need only 7 lines to implement a general election [debate](https://github.com/geekan/MetaGPT/blob/main/examples/debate_simple.py). Check out more details [here](https://github.com/geekan/MetaGPT/releases/tag/v0.6.0)!

-

-

-🚀 Dec 15: [v0.5.0](https://github.com/geekan/MetaGPT/releases/tag/v0.5.0) is released! We introduced **incremental development**, facilitating agents to build up larger projects on top of their previous efforts or existing codebase. We also launched a whole collection of important features, including **multilingual support** (experimental), multiple **programming languages support** (experimental), **incremental development** (experimental), CLI support, pip support, enhanced code review, documentation mechanism, and optimized messaging mechanism!

+

Software Company Multi-Agent Schematic (Gradually Implementing)

## Install

### Pip installation

+> Ensure that Python 3.9+ is installed on your system. You can check this by using: `python --version`.

+> You can use conda like this: `conda create -n metagpt python=3.9 && conda activate metagpt`

+

```bash

-# Step 1: Ensure that Python 3.9+ is installed on your system. You can check this by using:

-# You can use conda to initialize a new python env

-# conda create -n metagpt python=3.9

-# conda activate metagpt

-python3 --version

+pip install metagpt

+metagpt --init-config # create ~/.metagpt/config2.yaml, then edit it to match your own setup

+metagpt "Create a 2048 game" # this will create a repo in ./workspace

+```

-# Step 2: Clone the repository to your local machine for latest version, and install it.

-git clone https://github.com/geekan/MetaGPT.git

-cd MetaGPT

-pip3 install -e . # or pip3 install metagpt # for stable version

+Or you can use it as a library:

-# Step 3: setup your OPENAI_API_KEY, or make sure it existed in the env

-mkdir ~/.metagpt

-cp config/config.yaml ~/.metagpt/config.yaml

-vim ~/.metagpt/config.yaml

-

-# Step 4: run metagpt cli

-metagpt "Create a 2048 game in python"

-

-# Step 5 [Optional]: If you want to save the artifacts like diagrams such as quadrant chart, system designs, sequence flow in the workspace, you can execute the step before Step 3. By default, the framework is compatible, and the entire process can be run completely without executing this step.

-# If executing, ensure that NPM is installed on your system. Then install mermaid-js. (If you don't have npm in your computer, please go to the Node.js official website to install Node.js https://nodejs.org/ and then you will have npm tool in your computer.)

-npm --version

-sudo npm install -g @mermaid-js/mermaid-cli

+```python

+from metagpt.software_company import generate_repo, ProjectRepo

+repo: ProjectRepo = generate_repo("Create a 2048 game") # or ProjectRepo("<path>")

+print(repo) # it will print the repo structure with files

```

For detailed installation instructions, please refer to [cli_install](https://docs.deepwisdom.ai/main/en/guide/get_started/installation.html#install-stable-version)

@@ -75,19 +78,19 @@ ### Docker installation

> Note: On Windows, you need to replace "/opt/metagpt" with a directory that Docker has permission to create, such as "D:\Users\x\metagpt"

```bash

-# Step 1: Download metagpt official image and prepare config.yaml

+# Step 1: Download metagpt official image and prepare config2.yaml

docker pull metagpt/metagpt:latest

mkdir -p /opt/metagpt/{config,workspace}

-docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config.yaml > /opt/metagpt/config/key.yaml

-vim /opt/metagpt/config/key.yaml # Change the config

+docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config2.yaml > /opt/metagpt/config/config2.yaml

+vim /opt/metagpt/config/config2.yaml # Change the config

# Step 2: Run metagpt demo with container

docker run --rm \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest \

- metagpt "Write a cli snake game"

+ metagpt "Create a 2048 game"

```

For detailed installation instructions, please refer to [docker_install](https://docs.deepwisdom.ai/main/en/guide/get_started/installation.html#install-with-docker)

diff --git a/config/config2.yaml b/config/config2.yaml

index 5e7f34809..2c4ca636f 100644

--- a/config/config2.yaml

+++ b/config/config2.yaml

@@ -1,3 +1,3 @@

llm:

api_key: "YOUR_API_KEY"

- model: "gpt-3.5-turbo-1106"

\ No newline at end of file

+ model: "gpt-4-turbo-preview" # or gpt-3.5-turbo-1106 / gpt-4-1106-preview

\ No newline at end of file

diff --git a/config/config2.yaml.example b/config/config2.yaml.example

index 35575e5a5..2217f1b2c 100644

--- a/config/config2.yaml.example

+++ b/config/config2.yaml.example

@@ -1,8 +1,8 @@

llm:

- api_type: "openai"

+ api_type: "openai" # or azure / ollama etc.

base_url: "YOUR_BASE_URL"

api_key: "YOUR_API_KEY"

- model: "gpt-3.5-turbo-1106" # or gpt-4-1106-preview

+ model: "gpt-4-turbo-preview" # or gpt-3.5-turbo-1106 / gpt-4-1106-preview

proxy: "YOUR_PROXY"

@@ -11,6 +11,10 @@ search:

api_key: "YOUR_API_KEY"

cse_id: "YOUR_CSE_ID"

+browser:

+ engine: "playwright" # playwright/selenium

+ browser_type: "chromium" # playwright: chromium/firefox/webkit; selenium: chrome/firefox/edge/ie

+

mermaid:

engine: "pyppeteer"

path: "/Applications/Google Chrome.app"
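The new `browser` section pairs an `engine` with a `browser_type`, and its comments list which types each engine accepts. A minimal validation sketch, assuming the section has already been parsed from `config2.yaml` into a plain dict and defaulting to the example values (`playwright` / `chromium`) when a key is missing; `validate_browser` is a hypothetical helper, not part of MetaGPT:

```python
# Allowed browser_type values per engine, copied from the comments in config2.yaml.example.
SUPPORTED_BROWSERS = {
    "playwright": {"chromium", "firefox", "webkit"},
    "selenium": {"chrome", "firefox", "edge", "ie"},
}


def validate_browser(browser: dict) -> None:
    """Raise ValueError if `browser` names an unknown engine or a browser_type it does not support."""
    engine = browser.get("engine", "playwright")  # assumed default, taken from the example file
    browser_type = browser.get("browser_type", "chromium")  # assumed default
    if engine not in SUPPORTED_BROWSERS:
        raise ValueError(f"unknown browser engine: {engine!r}")
    if browser_type not in SUPPORTED_BROWSERS[engine]:
        allowed = ", ".join(sorted(SUPPORTED_BROWSERS[engine]))
        raise ValueError(f"{engine} does not support {browser_type!r}; use one of: {allowed}")


validate_browser({"engine": "playwright", "browser_type": "webkit"})  # valid pairing, returns None
try:
    validate_browser({"engine": "selenium", "browser_type": "webkit"})
except ValueError as err:
    print(err)  # selenium does not support 'webkit'; use one of: chrome, edge, firefox, ie
```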

@@ -29,14 +33,13 @@ s3:

bucket: "test"

-AZURE_TTS_SUBSCRIPTION_KEY: "YOUR_SUBSCRIPTION_KEY"

-AZURE_TTS_REGION: "eastus"

+azure_tts_subscription_key: "YOUR_SUBSCRIPTION_KEY"

+azure_tts_region: "eastus"

-IFLYTEK_APP_ID: "YOUR_APP_ID"

-IFLYTEK_API_KEY: "YOUR_API_KEY"

-IFLYTEK_API_SECRET: "YOUR_API_SECRET"

+iflytek_app_id: "YOUR_APP_ID"

+iflytek_api_key: "YOUR_API_KEY"

+iflytek_api_secret: "YOUR_API_SECRET"

-METAGPT_TEXT_TO_IMAGE_MODEL_URL: "YOUR_MODEL_URL"

-

-PYPPETEER_EXECUTABLE_PATH: "/Applications/Google Chrome.app"

+metagpt_tti_url: "YOUR_MODEL_URL"

+repair_llm_output: true
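This diff moves from upper-case flat keys (e.g. `AZURE_TTS_REGION`, `METAGPT_TEXT_TO_IMAGE_MODEL_URL`) to lower-case keys, with the Mermaid engine nested under `mermaid:`. A sketch of a one-off migration for an old `key.yaml` that has already been loaded into a dict; the mapping table is transcribed from the renames in this diff, `migrate` is hypothetical, and any key without a known mapping is reported rather than guessed:

```python
from typing import Dict, List, Tuple

# Legacy flat key -> path of nested keys in config2.yaml, transcribed from the renames in this diff.
LEGACY_TO_CONFIG2: Dict[str, Tuple[str, ...]] = {
    "AZURE_TTS_SUBSCRIPTION_KEY": ("azure_tts_subscription_key",),
    "AZURE_TTS_REGION": ("azure_tts_region",),
    "IFLYTEK_APP_ID": ("iflytek_app_id",),
    "IFLYTEK_API_KEY": ("iflytek_api_key",),
    "IFLYTEK_API_SECRET": ("iflytek_api_secret",),
    "METAGPT_TEXT_TO_IMAGE_MODEL_URL": ("metagpt_tti_url",),
    "MERMAID_ENGINE": ("mermaid", "engine"),
}


def migrate(legacy: Dict[str, object]) -> Tuple[dict, List[str]]:
    """Return (config2-style dict, legacy keys with no known mapping)."""
    new: dict = {}
    unmapped: List[str] = []
    for key, value in legacy.items():
        path = LEGACY_TO_CONFIG2.get(key)
        if path is None:
            unmapped.append(key)
            continue
        node = new
        for part in path[:-1]:
            node = node.setdefault(part, {})  # create nested sections such as `mermaid:`
        node[path[-1]] = value
    return new, unmapped


new, unmapped = migrate({"AZURE_TTS_REGION": "eastus", "MERMAID_ENGINE": "playwright", "OPENAI_API_KEY": "YOUR_API_KEY"})
print(new)       # {'azure_tts_region': 'eastus', 'mermaid': {'engine': 'playwright'}}
print(unmapped)  # ['OPENAI_API_KEY']
```

Writing the result back to `config2.yaml` would need a YAML library such as PyYAML, which is left out here to keep the sketch dependency-free.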

diff --git a/docs/.well-known/metagpt_oas3_api.yaml b/docs/.well-known/metagpt_oas3_api.yaml

index 0a702e8b6..1f370b62d 100644

--- a/docs/.well-known/metagpt_oas3_api.yaml

+++ b/docs/.well-known/metagpt_oas3_api.yaml

@@ -14,16 +14,16 @@ paths:

/tts/azsure:

x-prerequisite:

configurations:

- AZURE_TTS_SUBSCRIPTION_KEY:

+ azure_tts_subscription_key:

type: string

description: "For more details, check out: [Azure Text-to_Speech](https://learn.microsoft.com/en-us/azure/ai-services/speech-service/language-support?tabs=tts)"

- AZURE_TTS_REGION:

+ azure_tts_region:

type: string

description: "For more details, check out: [Azure Text-to_Speech](https://learn.microsoft.com/en-us/azure/ai-services/speech-service/language-support?tabs=tts)"

required:

allOf:

- - AZURE_TTS_SUBSCRIPTION_KEY

- - AZURE_TTS_REGION

+ - azure_tts_subscription_key

+ - azure_tts_region

post:

summary: "Convert Text to Base64-encoded .wav File Stream"

description: "For more details, check out: [Azure Text-to_Speech](https://learn.microsoft.com/en-us/azure/ai-services/speech-service/language-support?tabs=tts)"

@@ -94,9 +94,9 @@ paths:

description: "WebAPI argument, see: `https://console.xfyun.cn/services/tts`"

required:

allOf:

- - IFLYTEK_APP_ID

- - IFLYTEK_API_KEY

- - IFLYTEK_API_SECRET

+ - iflytek_app_id

+ - iflytek_api_key

+ - iflytek_api_secret

post:

summary: "Convert Text to Base64-encoded .mp3 File Stream"

description: "For more details, check out: [iFlyTek](https://console.xfyun.cn/services/tts)"

@@ -242,12 +242,12 @@ paths:

/txt2image/metagpt:

x-prerequisite:

configurations:

- METAGPT_TEXT_TO_IMAGE_MODEL_URL:

+ metagpt_tti_url:

type: string

description: "Model url."

required:

allOf:

- - METAGPT_TEXT_TO_IMAGE_MODEL_URL

+ - metagpt_tti_url

post:

summary: "Text to Image"

description: "Generate an image from the provided text using the MetaGPT Text-to-Image API."

diff --git a/docs/.well-known/skills.yaml b/docs/.well-known/skills.yaml

index c19a9501e..30c215445 100644

--- a/docs/.well-known/skills.yaml

+++ b/docs/.well-known/skills.yaml

@@ -14,10 +14,10 @@ entities:

id: text_to_speech.text_to_speech

x-prerequisite:

configurations:

- AZURE_TTS_SUBSCRIPTION_KEY:

+ azure_tts_subscription_key:

type: string

description: "For more details, check out: [Azure Text-to_Speech](https://learn.microsoft.com/en-us/azure/ai-services/speech-service/language-support?tabs=tts)"

- AZURE_TTS_REGION:

+ azure_tts_region:

type: string

description: "For more details, check out: [Azure Text-to_Speech](https://learn.microsoft.com/en-us/azure/ai-services/speech-service/language-support?tabs=tts)"

IFLYTEK_APP_ID:

@@ -32,12 +32,12 @@ entities:

required:

oneOf:

- allOf:

- - AZURE_TTS_SUBSCRIPTION_KEY

- - AZURE_TTS_REGION

+ - azure_tts_subscription_key

+ - azure_tts_region

- allOf:

- - IFLYTEK_APP_ID

- - IFLYTEK_API_KEY

- - IFLYTEK_API_SECRET

+ - iflytek_app_id

+ - iflytek_api_key

+ - iflytek_api_secret

parameters:

text:

description: 'The text used for voice conversion.'

@@ -103,13 +103,13 @@ entities:

OPENAI_API_KEY:

type: string

description: "OpenAI API key, For more details, checkout: `https://platform.openai.com/account/api-keys`"

- METAGPT_TEXT_TO_IMAGE_MODEL_URL:

+ metagpt_tti_url:

type: string

description: "Model url."

required:

oneOf:

- OPENAI_API_KEY

- - METAGPT_TEXT_TO_IMAGE_MODEL_URL

+ - metagpt_tti_url

parameters:

text:

description: 'The text used for image conversion.'
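Both `.well-known` files describe prerequisites as a small tree: a bare key name, an `allOf` list whose entries must all hold, or (in `skills.yaml`) a `oneOf` list of alternatives. A sketch of evaluating such a tree against a flat config dict; `satisfied` is hypothetical, a key counts as present only when its value is non-empty, and `oneOf` is read as "at least one alternative holds" (strict JSON Schema `oneOf` would require exactly one):

```python
from collections.abc import Mapping
from typing import Any


def satisfied(rule: Any, config: Mapping) -> bool:
    """Evaluate a prerequisite rule: a key name, {"allOf": [...]}, or {"oneOf": [...]}."""
    if isinstance(rule, str):
        return bool(config.get(rule))  # present and non-empty
    if isinstance(rule, Mapping) and "allOf" in rule:
        return all(satisfied(r, config) for r in rule["allOf"])
    if isinstance(rule, Mapping) and "oneOf" in rule:
        # Assumption: "at least one alternative", not JSON Schema's "exactly one".
        return any(satisfied(r, config) for r in rule["oneOf"])
    raise ValueError(f"unsupported rule: {rule!r}")


# The text_to_speech requirement from skills.yaml, transcribed as Python data.
TTS_RULE = {"oneOf": [
    {"allOf": ["azure_tts_subscription_key", "azure_tts_region"]},
    {"allOf": ["iflytek_app_id", "iflytek_api_key", "iflytek_api_secret"]},
]}

print(satisfied(TTS_RULE, {"azure_tts_subscription_key": "k", "azure_tts_region": "eastus"}))  # True
print(satisfied(TTS_RULE, {"iflytek_app_id": "id", "iflytek_api_key": "k"}))  # False: secret missing
```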

diff --git a/docs/FAQ-EN.md b/docs/FAQ-EN.md

index d4a9f6097..d3caa244e 100644

--- a/docs/FAQ-EN.md

+++ b/docs/FAQ-EN.md

@@ -1,183 +1,93 @@

Our vision is to [extend human life](https://github.com/geekan/HowToLiveLonger) and [reduce working hours](https://github.com/geekan/MetaGPT/).

-1. ### Convenient Link for Sharing this Document:

+### Convenient Link for Sharing this Document:

```

-- MetaGPT-Index/FAQ https://deepwisdom.feishu.cn/wiki/MsGnwQBjiif9c3koSJNcYaoSnu4

+- MetaGPT-Index/FAQ-EN https://github.com/geekan/MetaGPT/blob/main/docs/FAQ-EN.md

+- MetaGPT-Index/FAQ-CN https://deepwisdom.feishu.cn/wiki/MsGnwQBjiif9c3koSJNcYaoSnu4

```

-2. ### Link

-

-

+### Link

1. Code:https://github.com/geekan/MetaGPT

-

-1. Roadmap:https://github.com/geekan/MetaGPT/blob/main/docs/ROADMAP.md

-

-1. EN

-

- 1. Demo Video: [MetaGPT: Multi-Agent AI Programming Framework](https://www.youtube.com/watch?v=8RNzxZBTW8M)

+2. Roadmap:https://github.com/geekan/MetaGPT/blob/main/docs/ROADMAP.md

+3. EN

+ 1. Demo Video: [MetaGPT: Multi-Agent AI Programming Framework](https://www.youtube.com/watch?v=8RNzxZBTW8M)

2. Tutorial: [MetaGPT: Deploy POWERFUL Autonomous Ai Agents BETTER Than SUPERAGI!](https://www.youtube.com/watch?v=q16Gi9pTG_M&t=659s)

3. Author's thoughts video(EN): [MetaGPT Matthew Berman](https://youtu.be/uT75J_KG_aY?si=EgbfQNAwD8F5Y1Ak)

+4. CN

+ 1. Demo Video: [MetaGPT:一行代码搭建你的虚拟公司_哔哩哔哩_bilibili](https://www.bilibili.com/video/BV1NP411C7GW/?spm_id_from=333.999.0.0&vd_source=735773c218b47da1b4bd1b98a33c5c77)

+ 2. Tutorial: [一个提示词写游戏 Flappy bird, 比AutoGPT强10倍的MetaGPT,最接近AGI的AI项目](https://youtu.be/Bp95b8yIH5c)

+ 3. Author's thoughts video(CN): [MetaGPT作者深度解析直播回放_哔哩哔哩_bilibili](https://www.bilibili.com/video/BV1Ru411V7XL/?spm_id_from=333.337.search-card.all.click)

-1. CN

-

- 1. Demo Video: [MetaGPT:一行代码搭建你的虚拟公司_哔哩哔哩_bilibili](https://www.bilibili.com/video/BV1NP411C7GW/?spm_id_from=333.999.0.0&vd_source=735773c218b47da1b4bd1b98a33c5c77)

- 1. Tutorial: [一个提示词写游戏 Flappy bird, 比AutoGPT强10倍的MetaGPT,最接近AGI的AI项目](https://youtu.be/Bp95b8yIH5c)

- 2. Author's thoughts video(CN): [MetaGPT作者深度解析直播回放_哔哩哔哩_bilibili](https://www.bilibili.com/video/BV1Ru411V7XL/?spm_id_from=333.337.search-card.all.click)

-

-

-

-3. ### How to become a contributor?

-

-

+### How to become a contributor?

1. Choose a task from the Roadmap (or you can propose one). By submitting a PR, you can become a contributor and join the dev team.

-1. Current contributors come from backgrounds including ByteDance AI Lab/DingDong/Didi/Xiaohongshu, Tencent/Baidu/MSRA/TikTok/BloomGPT Infra/Bilibili/CUHK/HKUST/CMU/UCB

+2. Current contributors come from backgrounds including ByteDance AI Lab/DingDong/Didi/Xiaohongshu, Tencent/Baidu/MSRA/TikTok/BloomGPT Infra/Bilibili/CUHK/HKUST/CMU/UCB

-

-

-4. ### Chief Evangelist (Monthly Rotation)

+### Chief Evangelist (Monthly Rotation)

MetaGPT Community - The position of Chief Evangelist rotates on a monthly basis. The primary responsibilities include:

1. Maintaining community FAQ documents, announcements, and Github resources/READMEs.

-1. Responding to, answering, and distributing community questions within an average of 30 minutes, including on platforms like Github Issues, Discord and WeChat.

-1. Upholding a community atmosphere that is enthusiastic, genuine, and friendly.

-1. Encouraging everyone to become contributors and participate in projects that are closely related to achieving AGI (Artificial General Intelligence).

-1. (Optional) Organizing small-scale events, such as hackathons.

+2. Responding to, answering, and distributing community questions within an average of 30 minutes, including on platforms like Github Issues, Discord and WeChat.

+3. Upholding a community atmosphere that is enthusiastic, genuine, and friendly.

+4. Encouraging everyone to become contributors and participate in projects that are closely related to achieving AGI (Artificial General Intelligence).

+5. (Optional) Organizing small-scale events, such as hackathons.

-

-

-5. ### FAQ

-

-

-

-1. Experience with the generated repo code:

-

- 1. https://github.com/geekan/MetaGPT/releases/tag/v0.1.0

+### FAQ

1. Code truncation/ Parsing failure:

-

- 1. Check if it's due to exceeding length. Consider using the gpt-3.5-turbo-16k or other long token versions.

-

-1. Success rate:

-

- 1. There hasn't been a quantitative analysis yet, but the success rate of code generated by GPT-4 is significantly higher than that of gpt-3.5-turbo.

-

-1. Support for incremental, differential updates (if you wish to continue a half-done task):

-

- 1. Several prerequisite tasks are listed on the ROADMAP.

-

-1. Can existing code be loaded?

-

- 1. It's not on the ROADMAP yet, but there are plans in place. It just requires some time.

-

-1. Support for multiple programming languages and natural languages?

-

- 1. It's listed on ROADMAP.

-

-1. Want to join the contributor team? How to proceed?

-

+ 1. Check whether the output exceeded the length limit. Consider using gpt-4-turbo-preview or another model with a longer context window.

+2. Success rate:

+ 1. There hasn't been a quantitative analysis yet, but the success rate of code generated by gpt-4-turbo-preview is significantly higher than that of gpt-3.5-turbo.

+3. Support for incremental, differential updates (if you wish to continue a half-done task):

+ 1. There is now an experimental version. Specify `--inc --project-path ""` or `--inc --project-name ""` on the command line and enter the corresponding requirements to try it.

+4. Can existing code be loaded?

+ 1. We are working on this, but it is very difficult: for large projects especially, achieving a high success rate is hard.

+5. Support for multiple programming languages and natural languages?

+ 1. It is now supported, but still experimental.

+6. Want to join the contributor team? How to proceed?

1. Merging a PR will get you into the contributor's team. The main ongoing tasks are all listed on the ROADMAP.

-

-1. PRD stuck / unable to access/ connection interrupted

-

- 1. The official OPENAI_BASE_URL address is `https://api.openai.com/v1`

- 1. If the official OPENAI_BASE_URL address is inaccessible in your environment (this can be verified with curl), it's recommended to configure using the reverse proxy OPENAI_BASE_URL provided by libraries such as openai-forward. For instance, `OPENAI_BASE_URL: "``https://api.openai-forward.com/v1``"`

- 1. If the official OPENAI_BASE_URL address is inaccessible in your environment (again, verifiable via curl), another option is to configure the OPENAI_PROXY parameter. This way, you can access the official OPENAI_BASE_URL via a local proxy. If you don't need to access via a proxy, please do not enable this configuration; if accessing through a proxy is required, modify it to the correct proxy address. Note that when OPENAI_PROXY is enabled, don't set OPENAI_BASE_URL.

- 1. Note: OpenAI's default API design ends with a v1. An example of the correct configuration is: `OPENAI_BASE_URL: "``https://api.openai.com/v1``"`

-

-1. Absolutely! How can I assist you today?

-

+7. PRD stuck / unable to access/ connection interrupted

+ 1. The official OpenAI base_url is `https://api.openai.com/v1`

+ 2. If the official OpenAI base_url is inaccessible in your environment (you can verify this with curl), we recommend setting base_url to a reverse-proxy provider such as openai-forward, for instance `base_url: "https://api.openai-forward.com/v1"`

+ 3. If the official OpenAI base_url is inaccessible in your environment (again, verifiable with curl), another option is to configure `llm.proxy` in `config2.yaml`, so that you reach the official base_url through a local proxy. If you don't need a proxy, leave this setting disabled; if you do, set it to the correct proxy address.

+ 4. Note: OpenAI's default API path ends with `/v1`. An example of a correct configuration is `base_url: "https://api.openai.com/v1"`

+8. Getting the reply "Absolutely! How can I assist you today?"

1. Did you use Chi or a similar service? These services are prone to errors, and it seems that the error rate is higher when consuming 3.5k-4k tokens in GPT-4

-

-1. What does Max token mean?

-

+9. What does Max token mean?

1. It's a configuration for OpenAI's maximum response length. If the response exceeds the max token, it will be truncated.

-

-1. How to change the investment amount?

-

+10. How to change the investment amount?

1. You can view all commands by typing `metagpt --help`

-

-1. Which version of Python is more stable?

-

+11. Which version of Python is more stable?

1. python3.9 / python3.10

-

-1. Can't use GPT-4, getting the error "The model gpt-4 does not exist."

-

+12. Can't use GPT-4, getting the error "The model gpt-4 does not exist."

1. OpenAI's official requirement: You can use GPT-4 only after spending $1 on OpenAI.

1. Tip: Run some data with gpt-3.5-turbo (consume the free quota and $1), and then you should be able to use gpt-4.

-

-1. Can games whose code has never been seen before be written?

-

+13. Can games whose code has never been seen before be written?

1. Refer to the README. The recommendation system of Toutiao is one of the most complex systems in the world currently. Although it's not on GitHub, many discussions about it exist online. If it can visualize these, it suggests it can also summarize these discussions and convert them into code. The prompt would be something like "write a recommendation system similar to Toutiao". Note: this was approached in earlier versions of the software. The SOP of those versions was different; the current one adopts Elon Musk's five-step work method, emphasizing trimming down requirements as much as possible.

-

-1. Under what circumstances would there typically be errors?

-

+14. Under what circumstances would there typically be errors?

1. More than 500 lines of code: some function implementations may be left blank.

- 1. When using a database, it often gets the implementation wrong — since the SQL database initialization process is usually not in the code.

- 1. With more lines of code, there's a higher chance of false impressions, leading to calls to non-existent APIs.

-

-1. Instructions for using SD Skills/UI Role:

-

- 1. Currently, there is a test script located in /tests/metagpt/roles. The file ui_role provides the corresponding code implementation. For testing, you can refer to the test_ui in the same directory.

-

- 1. The UI role takes over from the product manager role, extending the output from the 【UI Design draft】 provided by the product manager role. The UI role has implemented the UIDesign Action. Within the run of UIDesign, it processes the respective context, and based on the set template, outputs the UI. The output from the UI role includes:

-

- 1. UI Design Description: Describes the content to be designed and the design objectives.

- 1. Selected Elements: Describes the elements in the design that need to be illustrated.

- 1. HTML Layout: Outputs the HTML code for the page.

- 1. CSS Styles (styles.css): Outputs the CSS code for the page.

-

- 1. Currently, the SD skill is a tool invoked by UIDesign. It instantiates the SDEngine, with specific code found in metagpt/tools/sd_engine.

-

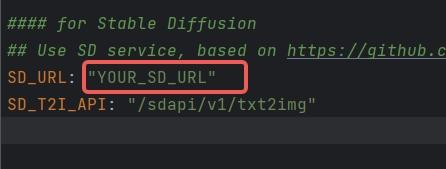

- 1. Configuration instructions for SD Skills: The SD interface is currently deployed based on *https://github.com/AUTOMATIC1111/stable-diffusion-webui* **For environmental configurations and model downloads, please refer to the aforementioned GitHub repository. To initiate the SD service that supports API calls, run the command specified in cmd with the parameter nowebui, i.e.,

-

- 1. > python3 webui.py --enable-insecure-extension-access --port xxx --no-gradio-queue --nowebui

- 1. Once it runs without errors, the interface will be accessible after approximately 1 minute when the model finishes loading.

- 1. Configure SD_URL and SD_T2I_API in the config.yaml/key.yaml files.

- 1.

- 1. SD_URL is the deployed server/machine IP, and Port is the specified port above, defaulting to 7860.

- 1. > SD_URL: IP:Port

-

-1. An error occurred during installation: "Another program is using this file...egg".

-

+ 2. When using a database, it often gets the implementation wrong — since the SQL database initialization process is usually not in the code.

+ 3. With more lines of code, there's a higher chance of hallucination, leading to calls to non-existent APIs.

+15. An error occurred during installation: "Another program is using this file...egg".

1. Delete the file and try again.

- 1. Or manually execute`pip install -r requirements.txt`

-

-1. The origin of the name MetaGPT?

-

+ 2. Or manually run `pip install -r requirements.txt`

+16. The origin of the name MetaGPT?

1. The name was derived after iterating with GPT-4 over a dozen rounds. GPT-4 scored and suggested it.

-

-1. Is there a more step-by-step installation tutorial?

-

- 1. Youtube(CN):[一个提示词写游戏 Flappy bird, 比AutoGPT强10倍的MetaGPT,最接近AGI的AI项目=一个软件公司产品经理+程序员](https://youtu.be/Bp95b8yIH5c)

- 1. Youtube(EN)https://www.youtube.com/watch?v=q16Gi9pTG_M&t=659s

- 2. video(EN): [MetaGPT Matthew Berman](https://youtu.be/uT75J_KG_aY?si=EgbfQNAwD8F5Y1Ak)

-

-1. openai.error.RateLimitError: You exceeded your current quota, please check your plan and billing details

-

+17. openai.error.RateLimitError: You exceeded your current quota, please check your plan and billing details

1. If you haven't exhausted your free quota, set RPM to 3 or lower in the settings.

- 1. If your free quota is used up, consider adding funds to your account.

-

-1. What does "borg" mean in n_borg?

-

+ 2. If your free quota is used up, consider adding funds to your account.

+18. What does "borg" mean in n_borg?

1. [Wikipedia borg meaning ](https://en.wikipedia.org/wiki/Borg)

- 1. The Borg civilization operates based on a hive or collective mentality, known as "the Collective." Every Borg individual is connected to the collective via a sophisticated subspace network, ensuring continuous oversight and guidance for every member. This collective consciousness allows them to not only "share the same thoughts" but also to adapt swiftly to new strategies. While individual members of the collective rarely communicate, the collective "voice" sometimes transmits aboard ships.

-

-1. How to use the Claude API?

-

+ 2. The Borg civilization operates based on a hive or collective mentality, known as "the Collective." Every Borg individual is connected to the collective via a sophisticated subspace network, ensuring continuous oversight and guidance for every member. This collective consciousness allows them to not only "share the same thoughts" but also to adapt swiftly to new strategies. While individual members of the collective rarely communicate, the collective "voice" sometimes transmits aboard ships.

+19. How to use the Claude API?

1. The full implementation of the Claude API is not provided in the current code.

1. You can use the Claude API through third-party API conversion projects like: https://github.com/jtsang4/claude-to-chatgpt

-

-1. Is Llama2 supported?

-

+20. Is Llama2 supported?

1. On the day Llama2 was released, some of the community members began experiments and found that output can be generated based on MetaGPT's structure. However, Llama2's context is too short to generate a complete project. Before regularly using Llama2, it's necessary to expand the context window to at least 8k. If anyone has good recommendations for expansion models or methods, please leave a comment.

-

-1. `mermaid-cli getElementsByTagName SyntaxError: Unexpected token '.'`

-

+21. `mermaid-cli getElementsByTagName SyntaxError: Unexpected token '.'`

1. Upgrade node to version 14.x or above:

-

1. `npm install -g n`

- 1. `n stable` to install the stable version of node(v18.x)

+ 2. Run `n stable` to install the stable version of Node (v18.x)
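FAQ item 7 above says the OpenAI `base_url` should end with `/v1`, and the old FAQ text shows how stray quotes and backticks can creep into the value. A minimal offline sketch of those checks using only `urllib.parse`; `check_base_url` is hypothetical and does not test reachability, which still needs curl or a real request:

```python
from typing import List
from urllib.parse import urlparse


def check_base_url(base_url: str) -> List[str]:
    """Return human-readable problems with a base_url; an empty list means it looks fine."""
    problems = []
    if any(ch in base_url for ch in "`\"'"):
        problems.append("remove stray quotes or backticks")
    parsed = urlparse(base_url.strip())
    if parsed.scheme not in ("http", "https"):
        problems.append("scheme must be http or https")
    if not parsed.netloc:
        problems.append("missing host")
    # Ignore a trailing slash, then require the path to end with /v1.
    if not parsed.path.rstrip("/").endswith("/v1"):
        problems.append("path should end with /v1")
    return problems


print(check_base_url("https://api.openai.com/v1"))  # []
print(check_base_url("https://api.openai.com"))     # ['path should end with /v1']
```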

diff --git a/docs/README_CN.md b/docs/README_CN.md

index 2855b5500..7a0db4974 100644

--- a/docs/README_CN.md

+++ b/docs/README_CN.md

@@ -35,50 +35,45 @@ # MetaGPT: Multi-Agent Framework

## Installation

### Pip installation

+> Ensure that Python 3.9 or later is installed on your system. You can check with: `python --version`.

+> You can use conda like this: `conda create -n metagpt python=3.9 && conda activate metagpt`

+

```bash

-# Step 1: Ensure that Python 3.9+ is installed on your system. You can check with the following command:

-# You can use conda to initialize a new python env

-# conda create -n metagpt python=3.9

-# conda activate metagpt

-python3 --version

-

-# Step 2: Clone the latest repository to your local machine and install it.

-git clone https://github.com/geekan/MetaGPT.git

-cd MetaGPT

-pip3 install -e. # or pip3 install metagpt # install the stable version

-

-# Step 3: Run metagpt

-# Copy config.yaml to key.yaml and set your own OPENAI_API_KEY

-metagpt "Write a cli snake game"

-

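-# Step 4 [Optional]: If you want to save artifacts such as quadrant charts, system designs, and sequence flow diagrams during execution, run this step before Step 3. By default the framework is compatible, so the whole process can run to completion without this step.

-# If you do run it, make sure NPM is installed on your system, and use npm to install mermaid-js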

-npm --version

-sudo npm install -g @mermaid-js/mermaid-cli

+pip install metagpt

+metagpt --init-config # create ~/.metagpt/config2.yaml and modify it to suit your needs

+metagpt "Create a 2048 game" # this will create a repo in ./workspace

```

-For detailed installation, please install [cli_install](https://docs.deepwisdom.ai/guide/get_started/installation.html#install-stable-version)

+Or you can use it as a library

+

+```python

+from metagpt.software_company import generate_repo, ProjectRepo

+repo: ProjectRepo = generate_repo("Create a 2048 game") # or ProjectRepo("<path>")

+print(repo) # it will print the repo structure and its files

+```

+

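+For detailed installation, please refer to [cli_install](https://docs.deepwisdom.ai/guide/get_started/installation.html#install-stable-version)

### Docker installation

> Note: On Windows, you need to replace "/opt/metagpt" with a directory that Docker has permission to create, such as "D:\Users\x\metagpt"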

```bash

-# Step 1: Download the official metagpt image and prepare config.yaml

+# Step 1: Download the official metagpt image and prepare config2.yaml

docker pull metagpt/metagpt:latest

mkdir -p /opt/metagpt/{config,workspace}

-docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config.yaml > /opt/metagpt/config/key.yaml

-vim /opt/metagpt/config/key.yaml # Modify the config file

+docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config2.yaml > /opt/metagpt/config/config2.yaml

+vim /opt/metagpt/config/config2.yaml # Modify the config file

# Step 2: Run the metagpt demo with a container

docker run --rm \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest \

metagpt "Write a cli snake game"

```

-For detailed installation, please install [docker_install](https://docs.deepwisdom.ai/main/zh/guide/get_started/installation.html#%E4%BD%BF%E7%94%A8docker%E5%AE%89%E8%A3%85)

+For detailed installation, please refer to [docker_install](https://docs.deepwisdom.ai/main/zh/guide/get_started/installation.html#%E4%BD%BF%E7%94%A8docker%E5%AE%89%E8%A3%85)

### Quick start demo video

- Try it out on [MetaGPT Huggingface Space](https://huggingface.co/spaces/deepwisdom/MetaGPT)

diff --git a/docs/README_JA.md b/docs/README_JA.md

index 8b2bf1fae..c6b99461c 100644

--- a/docs/README_JA.md

+++ b/docs/README_JA.md

@@ -57,24 +57,21 @@ ### Installation video guide

- [Matthew Berman: How To Install MetaGPT - Build A Startup With One Prompt!!](https://youtu.be/uT75J_KG_aY)

### Traditional installation

+> Make sure Python 3.9 or later is installed on your system. You can check this with `python --version`.

+> You can use conda as follows: `conda create -n metagpt python=3.9 && conda activate metagpt`

```bash

-# Step 1: Make sure Python 3.9+ is installed on your system. To check:

-python3 --version

+pip install metagpt

+metagpt --init-config # create ~/.metagpt/config2.yaml and modify it to match your own settings

+metagpt "Create a 2048 game" # this creates a repository in ./workspace

+```

-# Step 2: Clone the repository to your local machine and install it.

-git clone https://github.com/geekan/MetaGPT.git

-cd MetaGPT

-pip install -e.

+Alternatively, you can use it as a library

-# Step 3: Run metagpt

-# Copy config.yaml to key.yaml and set your own OPENAI_API_KEY

-metagpt "Write a cli snake game"

-

-# Step 4 [Optional]: If you want to save artifacts such as PRD files during execution, you can run this step before Step 3. By default the framework is compatible, and the whole process can complete without this step.

-# Make sure NPM is installed on your system, then install mermaid-js. (If your computer doesn't have npm, install Node.js from the official Node.js website https://nodejs.org/.)

-npm --version

-sudo npm install -g @mermaid-js/mermaid-cli

+```python

+from metagpt.software_company import generate_repo, ProjectRepo

+repo: ProjectRepo = generate_repo("Create a 2048 game") # or ProjectRepo("<path>")

+print(repo) # prints the repo structure and files

```

**Note:**

@@ -91,8 +88,8 @@ # Make sure NPM is installed on your system

- Don't forget to add the mmdc configuration to config.yml

```yml

- PUPPETEER_CONFIG: "./config/puppeteer-config.json"

- MMDC: "./node_modules/.bin/mmdc"

+ puppeteer_config: "./config/puppeteer-config.json"

+ path: "./node_modules/.bin/mmdc"

```

- If `pip install -e.` fails with the error `[Errno 13] Permission denied: '/usr/local/lib/python3.11/dist-packages/test-easy-install-13129.write-test'`, try running `pip install -e. --user` instead

@@ -114,12 +111,13 @@ # Make sure NPM is installed on your system

playwright install --with-deps chromium

```

- - **modify `config.yaml`**

+ - **modify `config2.yaml`**

- uncomment MERMAID_ENGINE in config.yaml and change it to `playwright`

+ uncomment mermaid.engine in config2.yaml and change it to `playwright`

```yaml

- MERMAID_ENGINE: playwright

+ mermaid:

+ engine: playwright

```

- pyppeteer

@@ -143,21 +141,23 @@ # Make sure NPM is installed on your system

pyppeteer-install

```

- - **modify `config.yaml`**

+ - **modify `config2.yaml`**

- uncomment MERMAID_ENGINE in config.yaml and change it to `pyppeteer`

+ uncomment mermaid.engine in config2.yaml and change it to `pyppeteer`

```yaml

- MERMAID_ENGINE: pyppeteer

+ mermaid:

+ engine: pyppeteer

```

- mermaid.ink

- - **modify `config.yaml`**

+ - **modify `config2.yaml`**

- uncomment MERMAID_ENGINE in config.yaml and change it to `ink`

+ uncomment mermaid.engine in config2.yaml and change it to `ink`

```yaml

- MERMAID_ENGINE: ink

+ mermaid:

+ engine: ink

```

Note: this method does not support pdf export.

@@ -166,16 +166,16 @@ ### Installation by Docker

> On Windows, replace "/opt/metagpt" with a directory that Docker has permission to create, such as "D:\Users\x\metagpt".

```bash

-# Step 1: Download the official metagpt image and prepare config.yaml

+# Step 1: Download the official metagpt image and prepare config2.yaml

docker pull metagpt/metagpt:latest

mkdir -p /opt/metagpt/{config,workspace}

-docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config.yaml > /opt/metagpt/config/key.yaml

-vim /opt/metagpt/config/key.yaml # modify the config

+docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config2.yaml > /opt/metagpt/config/config2.yaml

+vim /opt/metagpt/config/config2.yaml # modify the config

# Step 2: Run the metagpt demo in a container

docker run --rm \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest \

metagpt "Write a cli snake game"

@@ -183,7 +183,7 @@ # Step 2: Run the metagpt demo in a container

# You can also start a container and execute commands in it

docker run --name metagpt -d \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest

@@ -194,7 +194,7 @@ # You can also start a container and execute commands in it

The command `docker run ...` does the following:

- Runs in privileged mode to get permission to run the browser

-- Maps the host config file `/opt/metagpt/config/key.yaml` to the container file `/app/metagpt/config/key.yaml`

+- Maps the host config file `/opt/metagpt/config/config2.yaml` to the container file `/app/metagpt/config/config2.yaml`

- Maps the host directory `/opt/metagpt/workspace` to the container directory `/app/metagpt/workspace`

- Executes the demo command `metagpt "Write a cli snake game"`

@@ -208,19 +208,14 @@ # You can also build the metagpt image yourself.

## Configuration

-- Set `OPENAI_API_KEY` in any of `config/key.yaml / config/config.yaml / env`.

-- The priority order is: `config/key.yaml > config/config.yaml > env`.

+- Set `api_key` in any of `~/.metagpt/config2.yaml / config/config2.yaml`.

+- The priority order is: `~/.metagpt/config2.yaml > config/config2.yaml > env`.

```bash

# Copy the config file and make the necessary modifications.

-cp config/config.yaml config/key.yaml

+cp config/config2.yaml ~/.metagpt/config2.yaml

```

-| Variable name | config/key.yaml | env |

-| --------------------------------------- | ----------------------------------------- | ----------------------------------------------- |

-| OPENAI_API_KEY # replace with your own key | OPENAI_API_KEY: "sk-..." | export OPENAI_API_KEY="sk-..." |

-| OPENAI_BASE_URL # optional | OPENAI_BASE_URL: "https:///v1" | export OPENAI_BASE_URL="https:///v1" |

-

## Tutorial: Initiating a startup

```shell
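The configuration priority described above (`~/.metagpt/config2.yaml > config/config2.yaml`) can be pictured as a layered merge in which the higher-priority file wins key by key. The following is a minimal sketch under that assumption: it works on plain dicts rather than parsed YAML, `merge_configs` is a hypothetical helper (not MetaGPT's actual config loader), and both the key names (`llm`, `api_type`, `model`, `api_key`) and the recursive merging of nested sections are assumptions for illustration.

```python
from typing import Any, Dict


def merge_configs(*layers: Dict[str, Any]) -> Dict[str, Any]:
    """Merge config dicts; later layers (higher priority) override earlier ones.

    Hypothetical helper: nested dicts are merged recursively, while any other
    value is replaced outright. Input dicts are not mutated.
    """
    merged: Dict[str, Any] = {}
    for layer in layers:
        for key, value in layer.items():
            if isinstance(value, dict) and isinstance(merged.get(key), dict):
                merged[key] = merge_configs(merged[key], value)
            else:
                merged[key] = value
    return merged


# Lowest priority first: the repo default, then the user's home config.
repo_default = {"llm": {"api_type": "openai", "model": "gpt-4", "api_key": "YOUR_API_KEY"}}
home_config = {"llm": {"api_key": "sk-..."}}

config = merge_configs(repo_default, home_config)
print(config["llm"]["api_key"])
print(config["llm"]["model"])
```

With this design the home file only needs the keys you actually want to change; everything else falls through to the lower-priority default.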

diff --git a/docs/ROADMAP.md b/docs/ROADMAP.md

index 9bc62f849..4bb530bf2 100644

--- a/docs/ROADMAP.md

+++ b/docs/ROADMAP.md

@@ -76,9 +76,8 @@ ### Tasks

2. ~~Support Azure asynchronous API~~

3. Support streaming version of all APIs

4. ~~Make gpt-3.5-turbo available (HARD)~~

- 5. Support

10. Other

1. ~~Clean up existing unused code~~

- 2. Unify all code styles and establish contribution standards

+ 2. ~~Unify all code styles and establish contribution standards~~

3. ~~Multi-language support~~

4. ~~Multi-programming-language support~~

diff --git a/docs/install/cli_install.md b/docs/install/cli_install.md

index 80deda771..b79ad9cb7 100644

--- a/docs/install/cli_install.md

+++ b/docs/install/cli_install.md

@@ -9,17 +9,29 @@ ### Support System and version

### Detail Installation

```bash

-# Step 1: Ensure that NPM is installed on your system. Then install mermaid-js. (If you don't have npm in your computer, please go to the Node.js official website to install Node.js https://nodejs.org/ and then you will have npm tool in your computer.)

-npm --version

-sudo npm install -g @mermaid-js/mermaid-cli

-

-# Step 2: Ensure that Python 3.9+ is installed on your system. You can check this by using:

+# Step 1: Ensure that Python 3.9+ is installed on your system. You can check this by using:

+# You can use conda to initialize a new python env

+# conda create -n metagpt python=3.9

+# conda activate metagpt

python3 --version

-# Step 3: Clone the repository to your local machine, and install it.

+# Step 2: Clone the repository to your local machine for latest version, and install it.

git clone https://github.com/geekan/MetaGPT.git

cd MetaGPT

-pip install -e.

+pip3 install -e . # or pip3 install metagpt # for stable version

+

+# Step 3: setup your LLM key in the config2.yaml file

+mkdir ~/.metagpt

+cp config/config2.yaml ~/.metagpt/config2.yaml

+vim ~/.metagpt/config2.yaml

+

+# Step 4: run metagpt cli

+metagpt "Create a 2048 game in python"

+

+# Step 5 [Optional]: If you want to save artifacts such as diagrams (quadrant charts, system designs, sequence flows) in the workspace, run this step before Step 4. By default, the framework is compatible and the entire process can run without this step.

+# If you do run it, ensure that NPM is installed on your system, then install mermaid-js. (If npm is not on your computer, install Node.js from the official website https://nodejs.org/; npm ships with it.)

+npm --version

+sudo npm install -g @mermaid-js/mermaid-cli

```

**Note:**

@@ -33,11 +45,12 @@ # Step 3: Clone the repository to your local machine, and install it.

npm install @mermaid-js/mermaid-cli

```

-- don't forget to the configuration for mmdc in config.yml

+- don't forget to set the mmdc path in config2.yaml

- ```yml

- PUPPETEER_CONFIG: "./config/puppeteer-config.json"

- MMDC: "./node_modules/.bin/mmdc"

+ ```yaml

+ mermaid:

+ puppeteer_config: "./config/puppeteer-config.json"

+ path: "./node_modules/.bin/mmdc"

```

- if `pip install -e.` fails with error `[Errno 13] Permission denied: '/usr/local/lib/python3.11/dist-packages/test-easy-install-13129.write-test'`, try instead running `pip install -e. --user`

@@ -59,12 +72,13 @@ # Step 3: Clone the repository to your local machine, and install it.

playwright install --with-deps chromium

```

- - **modify `config.yaml`**

+ - **modify `config2.yaml`**

- uncomment MERMAID_ENGINE from config.yaml and change it to `playwright`

+ change mermaid.engine to `playwright`

```yaml

- MERMAID_ENGINE: playwright

+ mermaid:

+ engine: playwright

```

- pyppeteer

@@ -88,22 +102,24 @@ # Step 3: Clone the repository to your local machine, and install it.

pyppeteer-install

```

- - **modify `config.yaml`**

+ - **modify `config2.yaml`**

- uncomment MERMAID_ENGINE from config.yaml and change it to `pyppeteer`

+ change mermaid.engine to `pyppeteer`

```yaml

- MERMAID_ENGINE: pyppeteer

+ mermaid:

+ engine: pyppeteer

```

- mermaid.ink

- - **modify `config.yaml`**

-

- uncomment MERMAID_ENGINE from config.yaml and change it to `ink`

+ - **modify `config2.yaml`**

+

+ change mermaid.engine to `ink`

```yaml

- MERMAID_ENGINE: ink

+ mermaid:

+ engine: ink

```

Note: this method does not support pdf export.

-

\ No newline at end of file

+
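The changes above move the Mermaid settings from flat upper-case keys (`MERMAID_ENGINE`, `PUPPETEER_CONFIG`, `MMDC`) to a nested `mermaid:` section (`engine`, `puppeteer_config`, `path`). A minimal sketch of that key mapping for anyone porting an old config by hand, assuming the old values can be copied over unchanged; `migrate_mermaid_keys` is a hypothetical helper for illustration and is not part of MetaGPT.

```python
from typing import Any, Dict

# Old flat key -> new key under the `mermaid:` section, as shown in the diff.
MERMAID_KEY_MAP = {
    "MERMAID_ENGINE": "engine",
    "PUPPETEER_CONFIG": "puppeteer_config",
    "MMDC": "path",
}


def migrate_mermaid_keys(old: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new dict with flat Mermaid keys moved into a nested `mermaid` section.

    Keys that are not Mermaid-related are copied through unchanged.
    """
    new: Dict[str, Any] = {}
    mermaid: Dict[str, Any] = {}
    for key, value in old.items():
        if key in MERMAID_KEY_MAP:
            mermaid[MERMAID_KEY_MAP[key]] = value
        else:
            new[key] = value
    if mermaid:
        new["mermaid"] = mermaid
    return new


old_config = {
    "MERMAID_ENGINE": "playwright",
    "PUPPETEER_CONFIG": "./config/puppeteer-config.json",
    "MMDC": "./node_modules/.bin/mmdc",
}
print(migrate_mermaid_keys(old_config))
```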

diff --git a/docs/install/cli_install_cn.md b/docs/install/cli_install_cn.md

index b1da1b813..1ee18d9a6 100644

--- a/docs/install/cli_install_cn.md

+++ b/docs/install/cli_install_cn.md

@@ -10,17 +10,29 @@ ### Supported Systems and Versions

### Detailed Installation

```bash

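-# Step 1: Make sure NPM is installed on your system, and use npm to install mermaid-js

-npm --version

-sudo npm install -g @mermaid-js/mermaid-cli

-

-# Step 2: Make sure Python 3.9+ is installed on your system. You can check with the following command:

+# Step 1: Make sure Python 3.9 or later is installed on your system. You can check with the following command:

+# You can use conda to initialize a new Python environment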

+# conda create -n metagpt python=3.9

+# conda activate metagpt

python3 --version

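-# Step 3: Clone the repository to your local machine and install it.

+# Step 2: Clone the repository to your local machine to get the latest version, and install it.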

git clone https://github.com/geekan/MetaGPT.git

cd MetaGPT

-pip install -e.

+pip3 install -e . # or pip3 install metagpt # for the stable version

+

+# Step 3: Set your LLM key in the config2.yaml file

+mkdir ~/.metagpt

+cp config/config2.yaml ~/.metagpt/config2.yaml

+vim ~/.metagpt/config2.yaml

+

+# Step 4: Run the metagpt command-line interface

+metagpt "Create a 2048 game in python"

+

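+# Step 5 [Optional]: If you want to save diagrams such as quadrant charts, system designs and sequence flows as workspace artifacts, run this step before Step 4. By default, the framework is compatible and the entire process can run without this step.

+# If you run this step, make sure NPM is installed on your system, then install mermaid-js. (If npm is not on your computer, visit the official Node.js website https://nodejs.org/ to install Node.js, which includes npm.)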

+npm --version

+sudo npm install -g @mermaid-js/mermaid-cli

```

**Note:**

@@ -33,11 +45,12 @@ # Step 3: Clone the repository to your local machine and install it.

npm install @mermaid-js/mermaid-cli

```

-- Don't forget to configure mmdc in config.yml,

+- Don't forget to configure mmdc in config2.yaml

```yml

- PUPPETEER_CONFIG: "./config/puppeteer-config.json"

- MMDC: "./node_modules/.bin/mmdc"

+ mermaid:

+ puppeteer_config: "./config/puppeteer-config.json"

+ path: "./node_modules/.bin/mmdc"

```

- If `pip install -e.` fails with the error `[Errno 13] Permission denied: '/usr/local/lib/python3.11/dist-packages/test-easy-install-13129.write-test'`, try running `pip install -e. --user` instead.

diff --git a/docs/install/docker_install.md b/docs/install/docker_install.md

index 37125bdbe..2fe1b6abf 100644

--- a/docs/install/docker_install.md

+++ b/docs/install/docker_install.md

@@ -3,16 +3,16 @@ ## Docker Installation

### Use default MetaGPT image

```bash

-# Step 1: Download metagpt official image and prepare config.yaml

+# Step 1: Download metagpt official image and prepare config2.yaml

docker pull metagpt/metagpt:latest

mkdir -p /opt/metagpt/{config,workspace}

-docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config.yaml > /opt/metagpt/config/key.yaml

-vim /opt/metagpt/config/key.yaml # Change the config

+docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config2.yaml > /opt/metagpt/config/config2.yaml

+vim /opt/metagpt/config/config2.yaml # Change the config

# Step 2: Run metagpt demo with container

docker run --rm \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest \

metagpt "Write a cli snake game"

@@ -20,7 +20,7 @@ # Step 2: Run metagpt demo with container

# You can also start a container and execute commands in it

docker run --name metagpt -d \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest

@@ -31,7 +31,7 @@ # You can also start a container and execute commands in it

The command `docker run ...` does the following:

- Run in privileged mode to have permission to run the browser

-- Map host configure file `/opt/metagpt/config/key.yaml` to container `/app/metagpt/config/key.yaml`

+- Map host config file `/opt/metagpt/config/config2.yaml` to container `/app/metagpt/config/config2.yaml`

- Map host directory `/opt/metagpt/workspace` to container `/app/metagpt/workspace`

- Execute the demo command `metagpt "Write a cli snake game"`
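The `docker run` invocation above combines `--privileged` with two bind mounts (`-v host:container`) and then runs the demo command inside the image. A minimal sketch that assembles the same argument list in Python, for example when scripting the demo; `build_docker_run_args` and its defaults are illustrative assumptions. It only builds and prints the command; passing the list to `subprocess.run` would actually start the container.

```python
import shlex
from typing import List


def build_docker_run_args(
    host_root: str = "/opt/metagpt",
    container_root: str = "/app/metagpt",
    image: str = "metagpt/metagpt:latest",
    idea: str = "Write a cli snake game",
) -> List[str]:
    """Build the argv for the MetaGPT demo container shown above (hypothetical helper)."""
    mounts = [
        # Host config file -> container config file.
        (f"{host_root}/config/config2.yaml", f"{container_root}/config/config2.yaml"),
        # Host workspace directory -> container workspace directory.
        (f"{host_root}/workspace", f"{container_root}/workspace"),
    ]
    args = ["docker", "run", "--rm", "--privileged"]
    for host_path, container_path in mounts:
        args += ["-v", f"{host_path}:{container_path}"]
    args += [image, "metagpt", idea]
    return args


# shlex.join quotes the idea so the printed command can be pasted into a shell.
print(shlex.join(build_docker_run_args()))
```

Keeping the command as a list (rather than one string) avoids shell-quoting bugs when the idea contains spaces or quotes.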

diff --git a/docs/install/docker_install_cn.md b/docs/install/docker_install_cn.md

index f360b49ed..10204c1e0 100644

--- a/docs/install/docker_install_cn.md

+++ b/docs/install/docker_install_cn.md

@@ -3,16 +3,16 @@ ## Docker Installation

### Use the MetaGPT image

```bash

-# Step 1: Download the official metagpt image and prepare config.yaml

+# Step 1: Download the official metagpt image and prepare config2.yaml

docker pull metagpt/metagpt:latest

mkdir -p /opt/metagpt/{config,workspace}

-docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config.yaml > /opt/metagpt/config/key.yaml

-vim /opt/metagpt/config/key.yaml # modify the config file

+docker run --rm metagpt/metagpt:latest cat /app/metagpt/config/config2.yaml > /opt/metagpt/config/config2.yaml

+vim /opt/metagpt/config/config2.yaml # modify the config file

# Step 2: Run the metagpt demo in a container

docker run --rm \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest \

metagpt "Write a cli snake game"

@@ -20,7 +20,7 @@ # Step 2: Run the metagpt demo in a container

# You can also start a container and execute commands in it

docker run --name metagpt -d \

--privileged \

- -v /opt/metagpt/config/key.yaml:/app/metagpt/config/key.yaml \

+ -v /opt/metagpt/config/config2.yaml:/app/metagpt/config/config2.yaml \

-v /opt/metagpt/workspace:/app/metagpt/workspace \

metagpt/metagpt:latest

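@@ -31,7 +31,7 @@ # You can also start a container and execute commands in it

`docker run ...` does the following:

- Runs in privileged mode, with permission to run the browser

-- Maps the host file `/opt/metagpt/config/key.yaml` to the container file `/app/metagpt/config/key.yaml`

+- Maps the host file `/opt/metagpt/config/config2.yaml` to the container file `/app/metagpt/config/config2.yaml`

- Maps the host directory `/opt/metagpt/workspace` to the container directory `/app/metagpt/workspace`

- Executes the example command `metagpt "Write a cli snake game"`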

diff --git a/docs/tutorial/usage.md b/docs/tutorial/usage.md

index a08d92a22..1128e98a5 100644

--- a/docs/tutorial/usage.md

+++ b/docs/tutorial/usage.md

@@ -2,19 +2,14 @@ ## MetaGPT Usage

### Configuration

-- Configure your `OPENAI_API_KEY` in any of `config/key.yaml / config/config.yaml / env`

-- Priority order: `config/key.yaml > config/config.yaml > env`

+- Configure your `api_key` in any of `~/.metagpt/config2.yaml / config/config2.yaml`

+- Priority order: `~/.metagpt/config2.yaml > config/config2.yaml`

```bash

# Copy the configuration file and make the necessary modifications.

-cp config/config.yaml config/key.yaml

+cp config/config2.yaml ~/.metagpt/config2.yaml

```

-| Variable Name | config/key.yaml | env |

-| ------------------------------------------ | ----------------------------------------- | ----------------------------------------------- |

-| OPENAI_API_KEY # Replace with your own key | OPENAI_API_KEY: "sk-..." | export OPENAI_API_KEY="sk-..." |

-| OPENAI_BASE_URL # Optional | OPENAI_BASE_URL: "https:///v1" | export OPENAI_BASE_URL="https:///v1" |

-

### Initiating a startup

```shell

@@ -39,29 +34,28 @@ ### Preference of Platform or Tool

### Usage

```

-NAME

- metagpt - We are a software startup comprised of AI. By investing in us, you are empowering a future filled with limitless possibilities.

-

-SYNOPSIS

- metagpt IDEA

-

-DESCRIPTION

- We are a software startup comprised of AI. By investing in us, you are empowering a future filled with limitless possibilities.

-

-POSITIONAL ARGUMENTS

- IDEA

- Type: str

- Your innovative idea, such as "Creating a snake game."

-

-FLAGS

- --investment=INVESTMENT

- Type: float

- Default: 3.0

- As an investor, you have the opportunity to contribute a certain dollar amount to this AI company.

- --n_round=N_ROUND

- Type: int

- Default: 5

-

-NOTES

- You can also use flags syntax for POSITIONAL ARGUMENTS

+ Usage: metagpt [OPTIONS] [IDEA]

+

+ Start a new project.

+

+╭─ Arguments ────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮

+│ idea [IDEA] Your innovative idea, such as 'Create a 2048 game.' [default: None] │

+╰────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

+╭─ Options ──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮

+│ --investment FLOAT Dollar amount to invest in the AI company. [default: 3.0] │

+│ --n-round INTEGER Number of rounds for the simulation. [default: 5] │

+│ --code-review --no-code-review Whether to use code review. [default: code-review] │

+│ --run-tests --no-run-tests Whether to enable QA for adding & running tests. [default: no-run-tests] │

+│ --implement --no-implement Enable or disable code implementation. [default: implement] │

+│ --project-name TEXT Unique project name, such as 'game_2048'. │

+│ --inc --no-inc Incremental mode. Use it to coop with existing repo. [default: no-inc] │

+│ --project-path TEXT Specify the directory path of the old version project to fulfill the incremental requirements. │

+│ --reqa-file TEXT Specify the source file name for rewriting the quality assurance code. │

+│ --max-auto-summarize-code INTEGER The maximum number of times the 'SummarizeCode' action is automatically invoked, with -1 indicating unlimited. This parameter is used for debugging the │

+│ workflow. │

+│ [default: 0] │

+│ --recover-path TEXT recover the project from existing serialized storage [default: None] │

+│ --init-config --no-init-config Initialize the configuration file for MetaGPT. [default: no-init-config] │

+│ --help Show this message and exit. │

+╰────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

```

\ No newline at end of file
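The new help text shows paired boolean flags such as `--code-review / --no-code-review` alongside typed options like `--investment FLOAT`. The boxed layout suggests the real CLI is built with Typer; the sketch below does not use Typer. It re-creates a subset of those options with the standard library's `argparse.BooleanOptionalAction` (Python 3.9+), purely to illustrate how paired flags and their defaults behave. Option names and defaults are copied from the help text; `parse_cli` and everything else are assumptions.

```python
import argparse
from typing import List, Optional


def parse_cli(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse a subset of the `metagpt` options shown in the help text (illustrative only)."""
    parser = argparse.ArgumentParser(prog="metagpt", description="Start a new project.")
    parser.add_argument("idea", nargs="?", default=None, help="Your innovative idea, such as 'Create a 2048 game.'")
    parser.add_argument("--investment", type=float, default=3.0, help="Dollar amount to invest in the AI company.")
    parser.add_argument("--n-round", type=int, default=5, help="Number of rounds for the simulation.")
    # BooleanOptionalAction generates both --code-review and --no-code-review.
    parser.add_argument("--code-review", action=argparse.BooleanOptionalAction, default=True)
    parser.add_argument("--run-tests", action=argparse.BooleanOptionalAction, default=False)
    parser.add_argument("--implement", action=argparse.BooleanOptionalAction, default=True)
    parser.add_argument("--inc", action=argparse.BooleanOptionalAction, default=False)
    return parser.parse_args(argv)


args = parse_cli(["Create a 2048 game", "--no-code-review", "--n-round", "8"])
print(args.idea, args.code_review, args.n_round, args.investment)
```

Note that a dashed option such as `--n-round` is stored under the attribute `n_round`.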

diff --git a/docs/tutorial/usage_cn.md b/docs/tutorial/usage_cn.md

index 76a5d6b1b..3b0c86279 100644

--- a/docs/tutorial/usage_cn.md

+++ b/docs/tutorial/usage_cn.md

@@ -2,19 +2,14 @@ ## MetaGPT Usage

### Configuration

-- Configure your `OPENAI_API_KEY` in any of `config/key.yaml / config/config.yaml / env`

-- Priority order: `config/key.yaml > config/config.yaml > env`

+- Configure your `api_key` in any of `~/.metagpt/config2.yaml / config/config2.yaml`

+- Priority order: `~/.metagpt/config2.yaml > config/config2.yaml`

```bash

# Copy the configuration file and make the necessary modifications

-cp config/config.yaml config/key.yaml

+cp config/config2.yaml ~/.metagpt/config2.yaml

```

-| Variable name | config/key.yaml | env |

-| ----------------------------------- | ----------------------------------------- | ----------------------------------------------- |

-| OPENAI_API_KEY # Replace with your own key | OPENAI_API_KEY: "sk-..." | export OPENAI_API_KEY="sk-..." |

-| OPENAI_BASE_URL # Optional | OPENAI_BASE_URL: "https:///v1" | export OPENAI_BASE_URL="https:///v1" |

-

### Example: Initiating a startup

```shell

@@ -35,29 +30,28 @@ ### Preference of Platform or Tool

### Usage

```

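-NAME

- metagpt - We are an AI software startup. By investing in us, you are empowering a future full of limitless possibilities.

-

-SYNOPSIS

- metagpt IDEA

-

-DESCRIPTION

- We are an AI software startup. By investing in us, you are empowering a future full of limitless possibilities.

-

-POSITIONAL ARGUMENTS

- IDEA

- Type: str

- Your innovative idea, such as "Write a cli snake game."

-

-FLAGS

- --investment=INVESTMENT

- Type: float

- Default: 3.0

- As an investor, you have the opportunity to invest a certain dollar amount in this AI company.

- --n_round=N_ROUND

- Type: int

- Default: 5

-

-NOTES

- You can also use flags syntax for POSITIONAL ARGUMENTS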

+ Usage: metagpt [OPTIONS] [IDEA]

+

+ Start a new project.

+

+╭─ Arguments ────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮

+│ idea [IDEA] Your innovative idea, such as 'Create a 2048 game.' [default: None] │

+╰────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

+╭─ Options ──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮

+│ --investment FLOAT Dollar amount to invest in the AI company. [default: 3.0] │

+│ --n-round INTEGER Number of rounds for the simulation. [default: 5] │

+│ --code-review --no-code-review Whether to use code review. [default: code-review] │

+│ --run-tests --no-run-tests Whether to enable QA for adding & running tests. [default: no-run-tests] │

+│ --implement --no-implement Enable or disable code implementation. [default: implement] │

+│ --project-name TEXT Unique project name, such as 'game_2048'. │

+│ --inc --no-inc Incremental mode. Use it to coop with existing repo. [default: no-inc] │

+│ --project-path TEXT Specify the directory path of the old version project to fulfill the incremental requirements. │

+│ --reqa-file TEXT Specify the source file name for rewriting the quality assurance code. │

+│ --max-auto-summarize-code INTEGER The maximum number of times the 'SummarizeCode' action is automatically invoked, with -1 indicating unlimited. This parameter is used for debugging the │

+│ workflow. │

+│ [default: 0] │

+│ --recover-path TEXT recover the project from existing serialized storage [default: None] │

+│ --init-config --no-init-config Initialize the configuration file for MetaGPT. [default: no-init-config] │

+│ --help Show this message and exit. │

+╰────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯

```

diff --git a/examples/crawl_webpage.py b/examples/crawl_webpage.py

new file mode 100644

index 000000000..2db9e407b

--- /dev/null

+++ b/examples/crawl_webpage.py

@@ -0,0 +1,22 @@

+# -*- encoding: utf-8 -*-

+"""

+@Date : 2024/01/24 15:11:27

+@Author : orange-crow

+@File : crawl_webpage.py

+"""

+

+from metagpt.roles.ci.code_interpreter import CodeInterpreter

+

+

+async def main():

+ prompt = """Get data from `paperlist` table in https://papercopilot.com/statistics/iclr-statistics/iclr-2024-statistics/,

+ and save it to a csv file. paper title must include `multiagent` or `large language model`. *notice: print key variables*"""

+ ci = CodeInterpreter(goal=prompt, use_tools=True)

+

+ await ci.run(prompt)

+

+

+if __name__ == "__main__":

+ import asyncio

+

+ asyncio.run(main())

diff --git a/examples/dalle_gpt4v_agent.py b/examples/dalle_gpt4v_agent.py

new file mode 100644

index 000000000..28215dba3

--- /dev/null

+++ b/examples/dalle_gpt4v_agent.py

@@ -0,0 +1,77 @@

+#!/usr/bin/env python

+# -*- coding: utf-8 -*-

+# @Desc : use gpt4v to improve prompt and draw image with dall-e-3

+

+"""set `model: "gpt-4-vision-preview"` in `config2.yaml` first"""

+

+import asyncio

+

+from PIL import Image

+

+from metagpt.actions.action import Action

+from metagpt.logs import logger

+from metagpt.roles.role import Role

+from metagpt.schema import Message

+from metagpt.utils.common import encode_image

+

+

+class GenAndImproveImageAction(Action):

+ save_image: bool = True

+

+ async def generate_image(self, prompt: str) -> Image.Image:

+ imgs = await self.llm.gen_image(model="dall-e-3", prompt=prompt)

+ return imgs[0]

+

+ async def refine_prompt(self, old_prompt: str, image: Image.Image) -> str:

+ msg = (

+ f"You are a creative painter. Given the generated image and the old prompt: {old_prompt}, "

+ "please refine the prompt and generate a new one. Just output the new prompt."

+ )

+ b64_img = encode_image(image)

+ new_prompt = await self.llm.aask(msg=msg, images=[b64_img])

+ return new_prompt

+

+ async def evaluate_images(self, old_prompt: str, images: list[Image.Image]) -> str:

+ msg = (

+ f"Given the prompt: {old_prompt} and the two generated images, judge whether the second one is better than the first one. "

+ "If so, just output True else output False"

+ )

+ b64_imgs = [encode_image(img) for img in images]

+ res = await self.llm.aask(msg=msg, images=b64_imgs)

+ return res

+

+ async def run(self, messages: list[Message]) -> str:

+ prompt = messages[-1].content

+

+ old_img: Image.Image = await self.generate_image(prompt)

+ new_prompt = await self.refine_prompt(old_prompt=prompt, image=old_img)

+ logger.info(f"original prompt: {prompt}")

+ logger.info(f"refined prompt: {new_prompt}")

+ new_img: Image.Image = await self.generate_image(new_prompt)

+ if self.save_image:

+ old_img.save("./img_by-dall-e_old.png")

+ new_img.save("./img_by-dall-e_new.png")

+ res = await self.evaluate_images(old_prompt=prompt, images=[old_img, new_img])

+ opinion = f"The second generated image is better than the first one: {res}"

+ logger.info(f"evaluate opinion: {opinion}")

+ return opinion

+

+

+class Painter(Role):

+ name: str = "MaLiang"

+ profile: str = "Painter"

+ goal: str = "to generate fine paintings"

+

+ def __init__(self, **data):

+ super().__init__(**data)

+

+ self.set_actions([GenAndImproveImageAction])

+

+

+async def main():

+ role = Painter()

+ await role.run(with_message="a girl with flowers")

+

+

+if __name__ == "__main__":

+ asyncio.run(main())
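`GenAndImproveImageAction.run` chains four steps: generate an image from the prompt, ask a vision model to refine the prompt, generate a second image, then ask the model to compare the two. The sketch below reproduces only that control flow, with stub coroutines standing in for DALL-E 3 and GPT-4V so it runs without API keys. The stub names, the placeholder "image" strings, the toy judging rule and the verdict parsing are assumptions for illustration, not MetaGPT APIs.

```python
import asyncio
from typing import List, Tuple


async def fake_generate_image(prompt: str) -> str:
    """Stub for an image model: returns a placeholder 'image' describing the prompt."""
    await asyncio.sleep(0)  # yield control as a real network call would
    return f"<image of {prompt}>"


async def fake_refine_prompt(old_prompt: str, image: str) -> str:
    """Stub for a vision model that rewrites the prompt after seeing the image."""
    await asyncio.sleep(0)
    return f"{old_prompt}, soft lighting, detailed"


async def fake_evaluate(prompt: str, images: List[str]) -> str:
    """Stub judge: answers 'True' if the second image description is longer than the first."""
    await asyncio.sleep(0)
    return "True" if len(images[1]) > len(images[0]) else "False"


async def generate_and_improve(prompt: str) -> Tuple[str, str, bool]:
    old_img = await fake_generate_image(prompt)
    new_prompt = await fake_refine_prompt(prompt, old_img)
    new_img = await fake_generate_image(new_prompt)
    verdict = await fake_evaluate(prompt, [old_img, new_img])
    # The model answers in free text, so normalise before treating it as a bool.
    return new_prompt, new_img, verdict.strip().lower().startswith("true")


new_prompt, new_img, improved = asyncio.run(generate_and_improve("a girl with flowers"))
print(new_prompt)
print(improved)
```

Parsing the judge's free-text answer into a boolean is one way to make the final verdict usable by later steps; the original action simply logs the raw string.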

diff --git a/examples/example.faiss b/examples/example.faiss

deleted file mode 100644

index 580946190..000000000

Binary files a/examples/example.faiss and /dev/null differ

diff --git a/examples/example.pkl b/examples/example.pkl

deleted file mode 100644

index 0469a2e46..000000000

Binary files a/examples/example.pkl and /dev/null differ

diff --git a/examples/imitate_webpage.py b/examples/imitate_webpage.py

new file mode 100644

index 000000000..5075e1e39

--- /dev/null

+++ b/examples/imitate_webpage.py

@@ -0,0 +1,26 @@

+#!/usr/bin/env python

+# -*- coding: utf-8 -*-

+"""

+@Time : 2024/01/15

+@Author : mannaandpoem

+@File : imitate_webpage.py

+"""

+from metagpt.roles.ci.code_interpreter import CodeInterpreter

+

+

+async def main():

+ web_url = "https://pytorch.org/"

+ prompt = f"""This is the URL of a webpage: '{web_url}'.

+Firstly, utilize Selenium and WebDriver for rendering.

+Secondly, convert image to a webpage including HTML, CSS and JS in one go.

+Finally, save webpage in a text file.

+Note: All required dependencies and environments have been fully installed and configured."""

+ ci = CodeInterpreter(goal=prompt, use_tools=True)

+

+ await ci.run(prompt)

+

+

+if __name__ == "__main__":

+ import asyncio

+

+ asyncio.run(main())

diff --git a/examples/llm_hello_world.py b/examples/llm_hello_world.py

index 76be1cc90..219a303c8 100644

--- a/examples/llm_hello_world.py

+++ b/examples/llm_hello_world.py

@@ -23,6 +23,10 @@ async def main():

# streaming mode, much slower

await llm.acompletion_text(hello_msg, stream=True)

+ # if the LLM also exposes a synchronous `completion` method, test it as well

+ if hasattr(llm, "completion"):

+ logger.info(llm.completion(hello_msg))

+

if __name__ == "__main__":

asyncio.run(main())

diff --git a/examples/llm_vision.py b/examples/llm_vision.py

new file mode 100644

index 000000000..276decd59

--- /dev/null

+++ b/examples/llm_vision.py

@@ -0,0 +1,23 @@

+#!/usr/bin/env python

+# -*- coding: utf-8 -*-

+# @Desc : example to run the ability of LLM vision

+

+import asyncio

+from pathlib import Path

+

+from metagpt.llm import LLM

+from metagpt.utils.common import encode_image

+

+

+async def main():

+ llm = LLM()

+

+ # check whether the configured LLM supports vision input. If not, it will throw an error

+ invoice_path = Path(__file__).parent.joinpath("..", "tests", "data", "invoices", "invoice-2.png")

+ img_base64 = encode_image(invoice_path)

+ res = await llm.aask(msg="if this is an invoice, just return True else return False", images=[img_base64])

+ assert "true" in res.lower()

+

+

+if __name__ == "__main__":

+ asyncio.run(main())
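`llm_vision.py` passes the image to `llm.aask` as a base64 string produced by `encode_image`. The sketch below shows the general idea with the standard library only: read raw bytes and base64-encode them into an ASCII string. That `encode_image` returns plain base64 (rather than a full `data:` URL) is an assumption here, and `to_data_url` is a hypothetical helper included because many vision APIs accept images in that form.

```python
import base64
from pathlib import Path
from typing import Union


def encode_image_bytes(data: bytes) -> str:
    """Base64-encode raw image bytes into an ASCII string."""
    return base64.b64encode(data).decode("utf-8")


def encode_image_file(path: Union[str, Path]) -> str:
    """Read an image file from disk and base64-encode its contents."""
    return encode_image_bytes(Path(path).read_bytes())


def to_data_url(b64: str, mime: str = "image/png") -> str:
    """Wrap a base64 payload in a data URL (hypothetical helper)."""
    return f"data:{mime};base64,{b64}"


# Demo with in-memory bytes: the 8-byte PNG file signature.
png_signature = b"\x89PNG\r\n\x1a\n"
b64 = encode_image_bytes(png_signature)
print(b64)
print(to_data_url(b64))
```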

diff --git a/examples/sd_tool_usage.py b/examples/sd_tool_usage.py

new file mode 100644

index 000000000..b4642af23

--- /dev/null

+++ b/examples/sd_tool_usage.py

@@ -0,0 +1,21 @@

+# -*- coding: utf-8 -*-

+# @Date : 1/11/2024 7:06 PM

+# @Author : stellahong (stellahong@fuzhi.ai)

+# @Desc :

+import asyncio

+

+from metagpt.roles.ci.code_interpreter import CodeInterpreter

+

+

+async def main(requirement: str = ""):

+ code_interpreter = CodeInterpreter(use_tools=True, goal=requirement)

+ await code_interpreter.run(requirement)

+

+

+if __name__ == "__main__":

+ sd_url = "http://your.sd.service.ip:port"

+ requirement = (

+ f"I want to generate an image of a beautiful girl using the stable diffusion text2image tool, sd_url={sd_url}"

+ )

+

+ asyncio.run(main(requirement))

diff --git a/examples/search_with_specific_engine.py b/examples/search_with_specific_engine.py

index 9406a2965..97b1378ee 100644

--- a/examples/search_with_specific_engine.py

+++ b/examples/search_with_specific_engine.py

@@ -5,17 +5,20 @@

import asyncio

from metagpt.roles import Searcher

-from metagpt.tools import SearchEngineType

+from metagpt.tools.search_engine import SearchEngine, SearchEngineType

async def main():

question = "What are the most interesting human facts?"

+ kwargs = {"api_key": "", "cse_id": "", "proxy": None}

# Serper API

- # await Searcher(engine=SearchEngineType.SERPER_GOOGLE).run(question)

+ # await Searcher(search_engine=SearchEngine(engine=SearchEngineType.SERPER_GOOGLE, **kwargs)).run(question)

# SerpAPI

- await Searcher(engine=SearchEngineType.SERPAPI_GOOGLE).run(question)

+ # await Searcher(search_engine=SearchEngine(engine=SearchEngineType.SERPAPI_GOOGLE, **kwargs)).run(question)

# Google API

- # await Searcher(engine=SearchEngineType.DIRECT_GOOGLE).run(question)

+ # await Searcher(search_engine=SearchEngine(engine=SearchEngineType.DIRECT_GOOGLE, **kwargs)).run(question)

+ # DDG API

+ await Searcher(search_engine=SearchEngine(engine=SearchEngineType.DUCK_DUCK_GO, **kwargs)).run(question)

if __name__ == "__main__":
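After this change, `Searcher` receives a ready-made `SearchEngine` built from an engine type plus keyword arguments (`api_key`, `cse_id`, `proxy`), instead of a bare enum. The stdlib-only sketch below illustrates that "enum + options, then dispatch" pattern with stub backends. The enum member names mirror the ones used in the example, but the `Engine` class, its fields and the backend functions are hypothetical and do not reproduce MetaGPT's `SearchEngine` implementation.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable, Dict, List


class EngineType(Enum):
    # Names mirror the SearchEngineType members used in the example.
    SERPER_GOOGLE = "serper"
    SERPAPI_GOOGLE = "serpapi"
    DIRECT_GOOGLE = "google"
    DUCK_DUCK_GO = "ddg"


Backend = Callable[[str, Dict[str, Any]], List[str]]


def _stub_backend(name: str) -> Backend:
    """Create a fake backend that reports which engine handled the query."""
    def run(query: str, options: Dict[str, Any]) -> List[str]:
        return [f"{name} result for {query!r}"]
    return run


# One stub backend per engine type.
BACKENDS: Dict[EngineType, Backend] = {engine: _stub_backend(engine.value) for engine in EngineType}


@dataclass
class Engine:
    """Bundle an engine type with its settings, then dispatch queries to it."""
    engine: EngineType
    options: Dict[str, Any] = field(default_factory=dict)

    def run(self, query: str) -> List[str]:
        return BACKENDS[self.engine](query, self.options)


kwargs = {"api_key": "", "cse_id": "", "proxy": None}
search = Engine(engine=EngineType.DUCK_DUCK_GO, options=kwargs)
print(search.run("What are the most interesting human facts?"))
```

Bundling the engine choice with its credentials in one object means the consumer (here, a `Searcher`-like role) no longer needs to know how each engine is configured.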

diff --git a/examples/write_novel.py b/examples/write_novel.py

new file mode 100644

index 000000000..b272a56e6

--- /dev/null

+++ b/examples/write_novel.py

@@ -0,0 +1,49 @@

+#!/usr/bin/env python

+# -*- coding: utf-8 -*-

+"""

+@Time : 2024/2/1 12:01

+@Author : alexanderwu

+@File : write_novel.py

+"""

+import asyncio

+from typing import List

+

+from pydantic import BaseModel, Field

+

+from metagpt.actions.action_node import ActionNode

+from metagpt.llm import LLM

+

+

+class Novel(BaseModel):

+ name: str = Field(default="The Lord of the Rings", description="The name of the novel.")

+ user_group: str = Field(default="...", description="The user group of the novel.")

+ outlines: List[str] = Field(

+ default=["Chapter 1: ...", "Chapter 2: ...", "Chapter 3: ..."],

+ description="The outlines of the novel. No more than 10 chapters.",

+ )

+ background: str = Field(default="...", description="The background of the novel.")

+ character_names: List[str] = Field(default=["Frodo", "Gandalf", "Sauron"], description="The characters.")

+ conflict: str = Field(default="...", description="The conflict of the characters.")

+ plot: str = Field(default="...", description="The plot of the novel.")

+ ending: str = Field(default="...", description="The ending of the novel.")

+

+

+class Chapter(BaseModel):

+ name: str = Field(default="Chapter 1", description="The name of the chapter.")

+ content: str = Field(default="...", description="The content of the chapter. No more than 1000 words.")

+

+

+async def generate_novel():

+ instruction = (

+ "Write a novel named 'Harry Potter in The Lord of the Rings'. "

+ "Fill the empty nodes with your own ideas. Be creative! Use your own words! "

+ "I will tip you $100,000 if you write a good novel."

+ )

+ novel_node = await ActionNode.from_pydantic(Novel).fill(context=instruction, llm=LLM())

+ chap_node = await ActionNode.from_pydantic(Chapter).fill(

+ context=f"### instruction\n{instruction}\n### novel\n{novel_node.content}", llm=LLM()

+ )

+ print(chap_node.content)

+

+

+asyncio.run(generate_novel())

diff --git a/metagpt/actions/__init__.py b/metagpt/actions/__init__.py

index 5b995bab6..363b4fd33 100644

--- a/metagpt/actions/__init__.py

+++ b/metagpt/actions/__init__.py

@@ -22,6 +22,9 @@ from metagpt.actions.write_code_review import WriteCodeReview

from metagpt.actions.write_prd import WritePRD

from metagpt.actions.write_prd_review import WritePRDReview

from metagpt.actions.write_test import WriteTest

+from metagpt.actions.ci.execute_nb_code import ExecuteNbCode

+from metagpt.actions.ci.write_analysis_code import WriteCodeWithoutTools, WriteCodeWithTools

+from metagpt.actions.ci.write_plan import WritePlan

class ActionType(Enum):

@@ -42,6 +45,10 @@ class ActionType(Enum):

COLLECT_LINKS = CollectLinks

WEB_BROWSE_AND_SUMMARIZE = WebBrowseAndSummarize

CONDUCT_RESEARCH = ConductResearch

+ EXECUTE_NB_CODE = ExecuteNbCode

+ WRITE_CODE_WITHOUT_TOOLS = WriteCodeWithoutTools

+ WRITE_CODE_WITH_TOOLS = WriteCodeWithTools

+ WRITE_PLAN = WritePlan

__all__ = [

diff --git a/metagpt/actions/action.py b/metagpt/actions/action.py

index a33918a09..1b93213f7 100644

--- a/metagpt/actions/action.py

+++ b/metagpt/actions/action.py

@@ -15,6 +15,7 @@ from pydantic import BaseModel, ConfigDict, Field, model_validator

from metagpt.actions.action_node import ActionNode

from metagpt.context_mixin import ContextMixin

from metagpt.schema import (

+ CodePlanAndChangeContext,

CodeSummarizeContext,

CodingContext,

RunCodeContext,

@@ -28,14 +29,18 @@ class Action(SerializationMixin, ContextMixin, BaseModel):

model_config = ConfigDict(arbitrary_types_allowed=True)

name: str = ""

- i_context: Union[dict, CodingContext, CodeSummarizeContext, TestingContext, RunCodeContext, str, None] = ""

+ i_context: Union[

+ dict, CodingContext, CodeSummarizeContext, TestingContext, RunCodeContext, CodePlanAndChangeContext, str, None

+ ] = ""

prefix: str = "" # prepended as the system_message when aask* is called

desc: str = "" # for skill manager

node: ActionNode = Field(default=None, exclude=True)

@property

- def project_repo(self):

- return ProjectRepo(self.context.git_repo)

+ def repo(self) -> ProjectRepo:

+ if not self.context.repo:

+ self.context.repo = ProjectRepo(self.context.git_repo)

+ return self.context.repo

@property

def prompt_schema(self):
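The `action.py` change above replaces `project_repo`, which built a new `ProjectRepo` on every access, with a `repo` property that creates one lazily and caches it on the shared context. The following is a minimal, self-contained sketch of that caching pattern; `ProjectRepo`, `Context`, and `Action` here are hypothetical stand-ins, not MetaGPT's real classes.

```python
from dataclasses import dataclass
from typing import Optional


class ProjectRepo:
    """Hypothetical stand-in for MetaGPT's ProjectRepo; it only counts constructions."""

    instances = 0

    def __init__(self, git_repo: str):
        ProjectRepo.instances += 1
        self.git_repo = git_repo


@dataclass
class Context:
    """Hypothetical stand-in for the shared context object."""

    git_repo: str
    repo: Optional[ProjectRepo] = None


class Action:
    def __init__(self, context: Context):
        self.context = context

    @property
    def repo(self) -> ProjectRepo:
        # Build the ProjectRepo on first access and cache it on the shared context,
        # so every Action holding the same context reuses a single instance.
        if not self.context.repo:
            self.context.repo = ProjectRepo(self.context.git_repo)
        return self.context.repo


ctx = Context(git_repo="/tmp/demo_repo")
a1, a2 = Action(ctx), Action(ctx)
assert a1.repo is a2.repo
print(ProjectRepo.instances)  # 1
```

Caching on the context rather than on each `Action` means that all actions sharing that context see the same repository object.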

diff --git a/metagpt/actions/action_graph.py b/metagpt/actions/action_graph.py

new file mode 100644

index 000000000..893bc6d4c

--- /dev/null

+++ b/metagpt/actions/action_graph.py

@@ -0,0 +1,49 @@

+#!/usr/bin/env python

+# -*- coding: utf-8 -*-

+"""

+@Time : 2024/1/30 13:52

+@Author : alexanderwu

+@File : action_graph.py

+"""

+from __future__ import annotations

+

+# from metagpt.actions.action_node import ActionNode

+

+

+class ActionGraph:

+ """ActionGraph: a directed graph to represent the dependency between actions."""

+

+ def __init__(self):

+ self.nodes = {}

+ self.edges = {}

+ self.execution_order = []

+

+ def add_node(self, node):

+ """Add a node to the graph"""

+ self.nodes[node.key] = node

+

+ def add_edge(self, from_node: "ActionNode", to_node: "ActionNode"):

+ """Add an edge to the graph"""

+ if from_node.key not in self.edges:

+ self.edges[from_node.key] = []

+ self.edges[from_node.key].append(to_node.key)

+ from_node.add_next(to_node)

+ to_node.add_prev(from_node)

+

+ def topological_sort(self):

+ """Topologically sort the graph"""

+ visited = set()

+ stack = []

+

+ def visit(k):

+ if k not in visited:

+ visited.add(k)

+ if k in self.edges:

+ for next_node in self.edges[k]:

+ visit(next_node)

+ stack.insert(0, k)

+

+ for key in self.nodes:

+ visit(key)

+

+ self.execution_order = stack
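`ActionGraph.topological_sort` is a depth-first search that prepends each node after all of its successors have been visited. This produces a reverse post-order, which is a valid topological order for a DAG. The sketch below condenses the new class so it runs on its own. `Node` is a hypothetical minimal stand-in for `ActionNode` that carries only `key`, `prevs`, and `nexts`.

```python
from typing import Dict, List


class Node:
    """Hypothetical minimal stand-in for ActionNode: a key plus prev/next links."""

    def __init__(self, key: str):
        self.key = key
        self.prevs: List["Node"] = []
        self.nexts: List["Node"] = []

    def add_prev(self, node: "Node") -> None:
        self.prevs.append(node)

    def add_next(self, node: "Node") -> None:
        self.nexts.append(node)


class ActionGraph:
    """Condensed copy of the DFS-based topological sort added in this diff."""

    def __init__(self):
        self.nodes: Dict[str, Node] = {}
        self.edges: Dict[str, List[str]] = {}
        self.execution_order: List[str] = []

    def add_node(self, node: Node) -> None:
        self.nodes[node.key] = node

    def add_edge(self, from_node: Node, to_node: Node) -> None:
        self.edges.setdefault(from_node.key, []).append(to_node.key)
        from_node.add_next(to_node)
        to_node.add_prev(from_node)

    def topological_sort(self) -> None:
        visited = set()
        stack: List[str] = []

        def visit(k: str) -> None:
            if k not in visited:
                visited.add(k)
                for next_key in self.edges.get(k, []):
                    visit(next_key)
                # Post-order: k is prepended only after all of its successors,
                # so the final list is a reverse post-order (a topological order).
                stack.insert(0, k)

        for key in self.nodes:
            visit(key)
        self.execution_order = stack


# Diamond: a -> b, a -> c, b -> d, c -> d; nodes are added in reverse on purpose.
nodes = {k: Node(k) for k in "dcba"}
graph = ActionGraph()
for n in nodes.values():
    graph.add_node(n)
for u, v in [("a", "b"), ("a", "c"), ("b", "d"), ("c", "d")]:
    graph.add_edge(nodes[u], nodes[v])

graph.topological_sort()
print(graph.execution_order)  # ['a', 'b', 'c', 'd']
```

Note that this DFS does not detect cycles. For a cycle such as `a -> b -> a`, it silently returns an order in which one edge points backward. Its recursion depth is also bounded by Python's recursion limit.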

diff --git a/metagpt/actions/action_node.py b/metagpt/actions/action_node.py

index b4d8c32df..09da4a988 100644

--- a/metagpt/actions/action_node.py

+++ b/metagpt/actions/action_node.py

@@ -9,10 +9,11 @@ NOTE: You should use typing.List instead of list to do type annotation. Because

we can use typing to extract the type of the node, but we cannot use built-in list to extract.

"""

import json

+import typing

from enum import Enum

from typing import Any, Dict, List, Optional, Tuple, Type, Union

-from pydantic import BaseModel, create_model, model_validator

+from pydantic import BaseModel, Field, create_model, model_validator

from tenacity import retry, stop_after_attempt, wait_random_exponential

from metagpt.actions.action_outcls_registry import register_action_outcls

@@ -39,7 +40,6 @@ TAG = "CONTENT"

LANGUAGE_CONSTRAINT = "Language: Please use the same language as Human INPUT."

FORMAT_CONSTRAINT = f"Format: output wrapped inside [{TAG}][/{TAG}] like format example, nothing else."

-

SIMPLE_TEMPLATE = """

## context

{context}

@@ -61,7 +61,7 @@ Follow instructions of nodes, generate output and make sure it follows the forma

REVIEW_TEMPLATE = """

## context

-Compare the keys of nodes_output and the corresponding requirements one by one. If a key that does not match the requirement is found, provide the comment content on how to modify it. No output is required for matching keys.

+Compare the key's value of nodes_output and the corresponding requirements one by one. If a key's value that does not match the requirement is found, provide the comment content on how to modify it. No output is required for matching keys.

### nodes_output

{nodes_output}

@@ -86,7 +86,7 @@ Compare the keys of nodes_output and the corresponding requirements one by one.

{constraint}

## action

-generate output and make sure it follows the format example.

+Follow format example's {prompt_schema} format, generate output and make sure it follows the format example.

"""

REVISE_TEMPLATE = """

@@ -108,7 +108,7 @@ change the nodes_output key's value to meet its comment and no need to add extra

{constraint}

## action

-generate output and make sure it follows the format example.

+Follow format example's {prompt_schema} format, generate output and make sure it follows the format example.

"""

@@ -131,6 +131,8 @@ class ActionNode:

# Action Input

key: str # Product Requirement / File list / Code

+ func: typing.Callable # function or LLM call associated with the node

+ params: Dict[str, Type] # dict of input parameters: keys are parameter names, values are parameter types

expected_type: Type # such as str / int / float etc.

# context: str # everything in the history.

instruction: str # the instructions should be followed.

@@ -140,6 +142,10 @@ class ActionNode:

content: str

instruct_content: BaseModel

+ # For ActionGraph

+ prevs: List["ActionNode"] # previous nodes

+ nexts: List["ActionNode"] # next nodes

+

def __init__(

self,

key: str,

@@ -157,6 +163,8 @@ class ActionNode:

self.content = content

self.children = children if children is not None else {}

self.schema = schema

+ self.prevs = []

+ self.nexts = []

def __str__(self):

return (

@@ -167,6 +175,14 @@ class ActionNode:

def __repr__(self):

return self.__str__()

+ def add_prev(self, node: "ActionNode"):

+ """Add a preceding ActionNode"""

+ self.prevs.append(node)

+

+ def add_next(self, node: "ActionNode"):