Mirror of https://github.com/FoundationAgents/MetaGPT.git
Synced 2026-05-03 04:42:38 +02:00

Merge branch 'geekan:main' into main

Commit 2b91ca3dd0
31 changed files with 1489 additions and 275 deletions

14
Dockerfile

@@ -1,10 +1,10 @@
 # Use a base image with Python3.9 and Nodejs20 slim version
 FROM nikolaik/python-nodejs:python3.9-nodejs20-slim

-# Install Debian software needed by MetaGPT
+# Install Debian software needed by MetaGPT and clean up in one RUN command to reduce image size
 RUN apt update &&\
     apt install -y git chromium fonts-ipafont-gothic fonts-wqy-zenhei fonts-thai-tlwg fonts-kacst fonts-freefont-ttf libxss1 --no-install-recommends &&\
-    apt clean
+    apt clean && rm -rf /var/lib/apt/lists/*

 # Install Mermaid CLI globally
 ENV CHROME_BIN="/usr/bin/chromium" \

@@ -15,13 +15,11 @@ RUN npm install -g @mermaid-js/mermaid-cli &&\

 # Install Python dependencies and install MetaGPT
 COPY . /app/metagpt
-RUN cd /app/metagpt &&\
-    mkdir workspace &&\
-    pip install -r requirements.txt &&\
-    pip cache purge &&\
-    python setup.py install
-
+WORKDIR /app/metagpt
+RUN mkdir workspace &&\
+    pip install --no-cache-dir -r requirements.txt &&\
+    python setup.py install

 # Running with an infinite loop using the tail command
 CMD ["sh", "-c", "tail -f /dev/null"]

181
MetaGPT-Index/FAQ-EN.md
Normal file

@@ -0,0 +1,181 @@
+Our vision is to [extend human life](https://github.com/geekan/HowToLiveLonger) and [reduce working hours](https://github.com/geekan/MetaGPT/).
+
+1. ### Convenient Link for Sharing this Document:
+
+```
+- MetaGPT-Index/FAQ https://deepwisdom.feishu.cn/wiki/MsGnwQBjiif9c3koSJNcYaoSnu4
+```
+
+2. ### Link
+
+<!---->
+
+1. Code: https://github.com/geekan/MetaGPT
+
+1. Roadmap: https://github.com/geekan/MetaGPT/blob/main/docs/ROADMAP.md
+
+1. EN
+
+   1. Demo Video: [MetaGPT: Multi-Agent AI Programming Framework](https://www.youtube.com/watch?v=8RNzxZBTW8M)
+   1. Tutorial: [MetaGPT: Deploy POWERFUL Autonomous Ai Agents BETTER Than SUPERAGI!](https://www.youtube.com/watch?v=q16Gi9pTG_M&t=659s)
+
+1. CN
+
+   1. Demo Video: [MetaGPT: Build Your Virtual Company with One Line of Code (Bilibili)](https://www.bilibili.com/video/BV1NP411C7GW/?spm_id_from=333.999.0.0&vd_source=735773c218b47da1b4bd1b98a33c5c77)
+   1. Tutorial: [Writing Flappy Bird with a Single Prompt: MetaGPT, 10x Stronger than AutoGPT, the AI Project Closest to AGI](https://youtu.be/Bp95b8yIH5c)
+   1. Author's thoughts video (CN): [In-depth Analysis by the MetaGPT Author, Livestream Replay (Bilibili)](https://www.bilibili.com/video/BV1Ru411V7XL/?spm_id_from=333.337.search-card.all.click)
+
+<!---->
+
+3. ### How to become a contributor?
+
+<!---->
+
+1. Choose a task from the Roadmap (or propose one). By submitting a PR, you can become a contributor and join the dev team.
+1. Current contributors come from backgrounds including: ByteDance AI Lab/DingDong/Didi/Xiaohongshu, Tencent/Baidu/MSRA/TikTok/BloomGPT Infra/Bilibili/CUHK/HKUST/CMU/UCB
+
+<!---->
+
+4. ### Chief Evangelist (Monthly Rotation)
+
+MetaGPT Community - The position of Chief Evangelist rotates on a monthly basis. The primary responsibilities include:
+
+1. Maintaining community FAQ documents, announcements, and GitHub resources/READMEs.
+1. Responding to, answering, and distributing community questions within an average of 30 minutes, including on platforms like GitHub Issues, Discord and WeChat.
+1. Upholding a community atmosphere that is enthusiastic, genuine, and friendly.
+1. Encouraging everyone to become contributors and participate in projects that are closely related to achieving AGI (Artificial General Intelligence).
+1. (Optional) Organizing small-scale events, such as hackathons.
+
+<!---->
+
+5. ### FAQ
+
+<!---->
+
+1. Experience with the generated repo code:
+
+   1. https://github.com/geekan/MetaGPT/releases/tag/v0.1.0
+
+1. Code truncation / parsing failure:
+
+   1. Check whether it is caused by exceeding the length limit. Consider using gpt-3.5-turbo-16k or another long-context version.
+
+1. Success rate:
+
+   1. There hasn't been a quantitative analysis yet, but the success rate of code generated by GPT-4 is significantly higher than that of gpt-3.5-turbo.
+
+1. Support for incremental, differential updates (if you wish to continue a half-done task):
+
+   1. Several prerequisite tasks are listed on the ROADMAP.
+
+1. Can existing code be loaded?
+
+   1. It's not on the ROADMAP yet, but there are plans in place. It just requires some time.
+
+1. Support for multiple programming languages and natural languages?
+
+   1. It's listed on the ROADMAP.
+
+1. Want to join the contributor team? How to proceed?
+
+   1. Merging a PR will get you into the contributor team. The main ongoing tasks are all listed on the ROADMAP.
+

+1. PRD stuck / unable to access / connection interrupted
+
+   1. The official OPENAI_API_BASE address is `https://api.openai.com/v1`
+   1. If the official OPENAI_API_BASE address is inaccessible in your environment (this can be verified with curl), it's recommended to configure a reverse-proxy OPENAI_API_BASE provided by libraries such as openai-forward. For instance, `OPENAI_API_BASE: "https://api.openai-forward.com/v1"`
+   1. If the official OPENAI_API_BASE address is inaccessible in your environment (again, verifiable via curl), another option is to configure the OPENAI_PROXY parameter, so that you reach the official OPENAI_API_BASE through a local proxy. If you don't need a proxy, leave this setting disabled; if you do, set it to the correct proxy address. Note that when OPENAI_PROXY is enabled, you should not set OPENAI_API_BASE.
+   1. Note: OpenAI's default API base URL ends with `/v1`. An example of a correct configuration is: `OPENAI_API_BASE: "https://api.openai.com/v1"`
+   1. Did you use Chi or a similar service? These services are prone to errors, and the error rate seems higher when a GPT-4 call consumes 3.5k-4k tokens.
+

1. What does Max token mean?

|

||||

|

||||

1. It's a configuration for OpenAI's maximum response length. If the response exceeds the max token, it will be truncated.

|

||||

|

||||

1. How to change the investment amount?

|

||||

|

||||

1. You can view all commands by typing `python startup.py --help`

|

||||

|

||||

1. Which version of Python is more stable?

|

||||

|

||||

1. python3.9 / python3.10

|

||||

|

||||

1. Can't use GPT-4, getting the error "The model gpt-4 does not exist."

|

||||

|

||||

1. OpenAI's official requirement: You can use GPT-4 only after spending $1 on OpenAI.

|

||||

1. Tip: Run some data with gpt-3.5-turbo (consume the free quota and $1), and then you should be able to use gpt-4.

|

||||

|

||||

1. Can games whose code has never been seen before be written?

|

||||

|

||||

1. Refer to the README. The recommendation system of Toutiao is one of the most complex systems in the world currently. Although it's not on GitHub, many discussions about it exist online. If it can visualize these, it suggests it can also summarize these discussions and convert them into code. The prompt would be something like "write a recommendation system similar to Toutiao". Note: this was approached in earlier versions of the software. The SOP of those versions was different; the current one adopts Elon Musk's five-step work method, emphasizing trimming down requirements as much as possible.

|

||||

|

||||

1. Under what circumstances would there typically be errors?

|

||||

|

||||

1. More than 500 lines of code: some function implementations may be left blank.

|

||||

1. When using a database, it often gets the implementation wrong — since the SQL database initialization process is usually not in the code.

|

||||

1. With more lines of code, there's a higher chance of false impressions, leading to calls to non-existent APIs.

|

||||

|

||||

1. Instructions for using SD Skills/UI Role:

|

||||

|

||||

1. Currently, there is a test script located in /tests/metagpt/roles. The file ui_role provides the corresponding code implementation. For testing, you can refer to the test_ui in the same directory.

|

||||

|

||||

1. The UI role takes over from the product manager role, extending the output from the 【UI Design draft】 provided by the product manager role. The UI role has implemented the UIDesign Action. Within the run of UIDesign, it processes the respective context, and based on the set template, outputs the UI. The output from the UI role includes:

|

||||

|

||||

1. UI Design Description:Describes the content to be designed and the design objectives.

|

||||

1. Selected Elements:Describes the elements in the design that need to be illustrated.

|

||||

1. HTML Layout:Outputs the HTML code for the page.

|

||||

1. CSS Styles (styles.css):Outputs the CSS code for the page.

|

||||

|

||||

1. Currently, the SD skill is a tool invoked by UIDesign. It instantiates the SDEngine, with specific code found in metagpt/tools/sd_engine.

|

||||

|

||||

1. Configuration instructions for SD Skills: The SD interface is currently deployed based on *https://github.com/AUTOMATIC1111/stable-diffusion-webui* **For environmental configurations and model downloads, please refer to the aforementioned GitHub repository. To initiate the SD service that supports API calls, run the command specified in cmd with the parameter nowebui, i.e.,

|

||||

|

||||

1. > python webui.py --enable-insecure-extension-access --port xxx --no-gradio-queue --nowebui

|

||||

1. Once it runs without errors, the interface will be accessible after approximately 1 minute when the model finishes loading.

|

||||

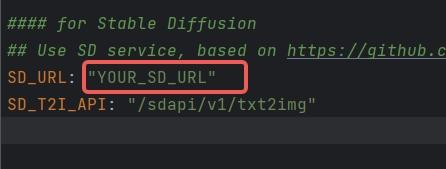

1. Configure SD_URL and SD_T2I_API in the config.yaml/key.yaml files.

|

||||

1.

|

||||

1. SD_URL is the deployed server/machine IP, and Port is the specified port above, defaulting to 7860.

|

||||

1. > SD_URL: IP:Port

|

||||

|

||||

1. An error occurred during installation: "Another program is using this file...egg".

|

||||

|

||||

1. Delete the file and try again.

|

||||

1. Or manually execute`pip install -r requirements.txt`

|

||||

|

||||

1. The origin of the name MetaGPT?

|

||||

|

||||

1. The name was derived after iterating with GPT-4 over a dozen rounds. GPT-4 scored and suggested it.

|

||||

|

||||

1. Is there a more step-by-step installation tutorial?

|

||||

|

||||

1. Youtube(CN):[一个提示词写游戏 Flappy bird, 比AutoGPT强10倍的MetaGPT,最接近AGI的AI项目=一个软件公司产品经理+程序员](https://youtu.be/Bp95b8yIH5c)

|

||||

1. Youtube(EN)https://www.youtube.com/watch?v=q16Gi9pTG_M&t=659s

|

||||

|

||||

1. openai.error.RateLimitError: You exceeded your current quota, please check your plan and billing details

|

||||

|

||||

1. If you haven't exhausted your free quota, set RPM to 3 or lower in the settings.

|

||||

1. If your free quota is used up, consider adding funds to your account.

|

||||

|

||||

1. What does "borg" mean in n_borg?

|

||||

|

||||

1. https://en.wikipedia.org/wiki/Borg

|

||||

1. The Borg civilization operates based on a hive or collective mentality, known as "the Collective." Every Borg individual is connected to the collective via a sophisticated subspace network, ensuring continuous oversight and guidance for every member. This collective consciousness allows them to not only "share the same thoughts" but also to adapt swiftly to new strategies. While individual members of the collective rarely communicate, the collective "voice" sometimes transmits aboard ships.

|

||||

|

||||

1. How to use the Claude API?

|

||||

|

||||

1. The full implementation of the Claude API is not provided in the current code.

|

||||

1. You can use the Claude API through third-party API conversion projects like: https://github.com/jtsang4/claude-to-chatgpt

|

||||

|

||||

1. Is Llama2 supported?

|

||||

|

||||

1. On the day Llama2 was released, some of the community members began experiments and found that output can be generated based on MetaGPT's structure. However, Llama2's context is too short to generate a complete project. Before regularly using Llama2, it's necessary to expand the context window to at least 8k. If anyone has good recommendations for expansion models or methods, please leave a comment.

|

||||

|

||||

1. `mermaid-cli getElementsByTagName SyntaxError: Unexpected token '.'`

|

||||

|

||||

1. Upgrade node to version 14.x or above:

|

||||

|

||||

1. `npm install -g n`

|

||||

1. `n stable` to install the stable version of node(v18.x)

|

||||

|

|
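The FAQ's rules for OPENAI_API_BASE and OPENAI_PROXY can be checked before a run. The sketch below is a hypothetical helper, not part of MetaGPT: `check_openai_settings` and its plain-dict input are assumptions. It encodes only what the FAQ states (the base URL ends with `/v1`; OPENAI_PROXY and OPENAI_API_BASE are not set together), and it treats the `YOUR_API_BASE` placeholder as unset, the way the `config.py` hunk further down does.

```python
from urllib.parse import urlparse


def check_openai_settings(settings: dict) -> list[str]:
    """Return a list of problems in the OpenAI-related settings (hypothetical helper)."""
    problems = []
    base = settings.get("OPENAI_API_BASE") or ""
    proxy = settings.get("OPENAI_PROXY") or ""
    # config.py treats an empty value or the "YOUR_API_BASE" placeholder as "not set".
    base_is_set = bool(base) and base != "YOUR_API_BASE"

    if base_is_set:
        parsed = urlparse(base)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append(f"OPENAI_API_BASE is not an http(s) URL: {base!r}")
        # The FAQ notes that OpenAI's API base URL ends with /v1.
        if not base.rstrip("/").endswith("/v1"):
            problems.append("OPENAI_API_BASE should end with /v1")
    # The FAQ says not to set OPENAI_API_BASE when OPENAI_PROXY is enabled.
    if proxy and base_is_set:
        problems.append("OPENAI_PROXY is set; leave OPENAI_API_BASE unset")
    return problems


if __name__ == "__main__":
    print(check_openai_settings({"OPENAI_API_BASE": "https://api.openai.com/v1"}))  # → []
    print(check_openai_settings({"OPENAI_API_BASE": "https://api.openai.com"}))  # → ['OPENAI_API_BASE should end with /v1']
```

The check is deliberately offline; whether the endpoint is actually reachable still has to be verified with curl, as the FAQ suggests.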

@@ -33,7 +33,7 @@ ## Examples (fully generated by GPT-4)

-It requires around **$0.2** (GPT-4 api's costs) to generate one example with analysis and design, around **$2.0** to a full project.
+It costs approximately **$0.2** (in GPT-4 API fees) to generate one example with analysis and design, and around **$2.0** for a full project.

 ## Installation

@@ -65,4 +65,8 @@ SD_T2I_API: "/sdapi/v1/txt2img"

 ### for update_costs & calc_usage
 UPDATE_COSTS: false
-CALC_USAGE: false
+CALC_USAGE: false
+
+### for Research
+MODEL_FOR_RESEARCHER_SUMMARY: gpt-3.5-turbo
+MODEL_FOR_RESEARCHER_REPORT: gpt-3.5-turbo-16k

16
examples/research.py
Normal file

@@ -0,0 +1,16 @@
+#!/usr/bin/env python
+
+import asyncio
+
+from metagpt.roles.researcher import RESEARCH_PATH, Researcher
+
+
+async def main():
+    topic = "dataiku vs. datarobot"
+    role = Researcher(language="en-us")
+    await role.run(topic)
+    print(f"save report to {RESEARCH_PATH / f'{topic}.md'}.")
+
+
+if __name__ == '__main__':
+    asyncio.run(main())

@@ -22,6 +22,7 @@ from metagpt.actions.write_code_review import WriteCodeReview
 from metagpt.actions.write_prd import WritePRD
 from metagpt.actions.write_prd_review import WritePRDReview
 from metagpt.actions.write_test import WriteTest
+from metagpt.actions.research import CollectLinks, WebBrowseAndSummarize, ConductResearch


 class ActionType(Enum):
@@ -40,3 +41,6 @@ class ActionType(Enum):
     WRITE_TASKS = WriteTasks
     ASSIGN_TASKS = AssignTasks
     SEARCH_AND_SUMMARIZE = SearchAndSummarize
+    COLLECT_LINKS = CollectLinks
+    WEB_BROWSE_AND_SUMMARIZE = WebBrowseAndSummarize
+    CONDUCT_RESEARCH = ConductResearch

277
metagpt/actions/research.py
Normal file

@@ -0,0 +1,277 @@
+#!/usr/bin/env python
+
+from __future__ import annotations
+
+import asyncio
+import json
+from typing import Callable
+
+from pydantic import parse_obj_as
+
+from metagpt.actions import Action
+from metagpt.config import CONFIG
+from metagpt.logs import logger
+from metagpt.tools.search_engine import SearchEngine
+from metagpt.tools.web_browser_engine import WebBrowserEngine, WebBrowserEngineType
+from metagpt.utils.text import generate_prompt_chunk, reduce_message_length
+
+LANG_PROMPT = "Please respond in {language}."
+
+RESEARCH_BASE_SYSTEM = """You are an AI critical thinker research assistant. Your sole purpose is to write well \
+written, critically acclaimed, objective and structured reports on the given text."""
+
+RESEARCH_TOPIC_SYSTEM = "You are an AI researcher assistant, and your research topic is:\n#TOPIC#\n{topic}"
+
+SEARCH_TOPIC_PROMPT = """Please provide up to 2 necessary keywords related to your research topic for Google search. \
+Your response must be in JSON format, for example: ["keyword1", "keyword2"]."""
+
+SUMMARIZE_SEARCH_PROMPT = """### Requirements
+1. The keywords related to your research topic and the search results are shown in the "Search Result Information" section.
+2. Provide up to {decomposition_nums} queries related to your research topic base on the search results.
+3. Please respond in the following JSON format: ["query1", "query2", "query3", ...].
+
+### Search Result Information
+{search_results}
+"""
+
+COLLECT_AND_RANKURLS_PROMPT = """### Topic
+{topic}
+### Query
+{query}
+
+### The online search results
+{results}
+
+### Requirements
+Please remove irrelevant search results that are not related to the query or topic. Then, sort the remaining search results \
+based on the link credibility. If two results have equal credibility, prioritize them based on the relevance. Provide the
+ranked results' indices in JSON format, like [0, 1, 3, 4, ...], without including other words.
+"""
+
+WEB_BROWSE_AND_SUMMARIZE_PROMPT = '''### Requirements
+1. Utilize the text in the "Reference Information" section to respond to the question "{query}".
+2. If the question cannot be directly answered using the text, but the text is related to the research topic, please provide \
+a comprehensive summary of the text.
+3. If the text is entirely unrelated to the research topic, please reply with a simple text "Not relevant."
+4. Include all relevant factual information, numbers, statistics, etc., if available.
+
+### Reference Information
+{content}
+'''
+
+
+CONDUCT_RESEARCH_PROMPT = '''### Reference Information
+{content}
+
+### Requirements
+Please provide a detailed research report in response to the following topic: "{topic}", using the information provided \
+above. The report must meet the following requirements:
+
+- Focus on directly addressing the chosen topic.
+- Ensure a well-structured and in-depth presentation, incorporating relevant facts and figures where available.
+- Present data and findings in an intuitive manner, utilizing feature comparative tables, if applicable.
+- The report should have a minimum word count of 2,000 and be formatted with Markdown syntax following APA style guidelines.
+- Include all source URLs in APA format at the end of the report.
+'''
+
+
+class CollectLinks(Action):
+    """Action class to collect links from a search engine."""
+    def __init__(
+        self,
+        name: str = "",
+        *args,
+        rank_func: Callable[[list[str]], None] | None = None,
+        **kwargs,
+    ):
+        super().__init__(name, *args, **kwargs)
+        self.desc = "Collect links from a search engine."
+        self.search_engine = SearchEngine()
+        self.rank_func = rank_func
+
+    async def run(
+        self,
+        topic: str,
+        decomposition_nums: int = 4,
+        url_per_query: int = 4,
+        system_text: str | None = None,
+    ) -> dict[str, list[str]]:
+        """Run the action to collect links.
+
+        Args:
+            topic: The research topic.
+            decomposition_nums: The number of search questions to generate.
+            url_per_query: The number of URLs to collect per search question.
+            system_text: The system text.
+
+        Returns:
+            A dictionary containing the search questions as keys and the collected URLs as values.
+        """
+        system_text = system_text if system_text else RESEARCH_TOPIC_SYSTEM.format(topic=topic)
+        keywords = await self._aask(SEARCH_TOPIC_PROMPT, [system_text])
+        try:
+            keywords = json.loads(keywords)
+            keywords = parse_obj_as(list[str], keywords)
+        except Exception as e:
+            logger.exception(f"fail to get keywords related to the research topic \"{topic}\" for {e}")
+            keywords = [topic]
+        results = await asyncio.gather(*(self.search_engine.run(i, as_string=False) for i in keywords))
+
+        def gen_msg():
+            while True:
+                search_results = "\n".join(f"#### Keyword: {i}\n Search Result: {j}\n" for (i, j) in zip(keywords, results))
+                prompt = SUMMARIZE_SEARCH_PROMPT.format(decomposition_nums=decomposition_nums, search_results=search_results)
+                yield prompt
+                remove = max(results, key=len)
+                remove.pop()
+                if len(remove) == 0:
+                    break
+        prompt = reduce_message_length(gen_msg(), self.llm.model, system_text, CONFIG.max_tokens_rsp)
+        logger.debug(prompt)
+        queries = await self._aask(prompt, [system_text])
+        try:
+            queries = json.loads(queries)
+            queries = parse_obj_as(list[str], queries)
+        except Exception as e:
+            logger.exception(f"fail to break down the research question due to {e}")
+            queries = keywords
+        ret = {}
+        for query in queries:
+            ret[query] = await self._search_and_rank_urls(topic, query, url_per_query)
+        return ret
+
+    async def _search_and_rank_urls(self, topic: str, query: str, num_results: int = 4) -> list[str]:
+        """Search and rank URLs based on a query.
+
+        Args:
+            topic: The research topic.
+            query: The search query.
+            num_results: The number of URLs to collect.
+
+        Returns:
+            A list of ranked URLs.
+        """
+        max_results = max(num_results * 2, 6)
+        results = await self.search_engine.run(query, max_results=max_results, as_string=False)
+        _results = "\n".join(f"{i}: {j}" for i, j in zip(range(max_results), results))
+        prompt = COLLECT_AND_RANKURLS_PROMPT.format(topic=topic, query=query, results=_results)
+        logger.debug(prompt)
+        indices = await self._aask(prompt)
+        try:
+            indices = json.loads(indices)
+            assert all(isinstance(i, int) for i in indices)
+        except Exception as e:
+            logger.exception(f"fail to rank results for {e}")
+            indices = list(range(max_results))
+        results = [results[i] for i in indices]
+        if self.rank_func:
+            results = self.rank_func(results)
+        return [i["link"] for i in results[:num_results]]
+
+
+class WebBrowseAndSummarize(Action):
+    """Action class to explore the web and provide summaries of articles and webpages."""
+    def __init__(
+        self,
+        *args,
+        browse_func: Callable[[list[str]], None] | None = None,
+        **kwargs,
+    ):
+        super().__init__(*args, **kwargs)
+        if CONFIG.model_for_researcher_summary:
+            self.llm.model = CONFIG.model_for_researcher_summary
+        self.web_browser_engine = WebBrowserEngine(
+            engine=WebBrowserEngineType.CUSTOM if browse_func else None,
+            run_func=browse_func,
+        )
+        self.desc = "Explore the web and provide summaries of articles and webpages."
+
+    async def run(
+        self,
+        url: str,
+        *urls: str,
+        query: str,
+        system_text: str = RESEARCH_BASE_SYSTEM,
+    ) -> dict[str, str]:
+        """Run the action to browse the web and provide summaries.
+
+        Args:
+            url: The main URL to browse.
+            urls: Additional URLs to browse.
+            query: The research question.
+            system_text: The system text.
+
+        Returns:
+            A dictionary containing the URLs as keys and their summaries as values.
+        """
+        contents = await self.web_browser_engine.run(url, *urls)
+        if not urls:
+            contents = [contents]
+
+        summaries = {}
+        prompt_template = WEB_BROWSE_AND_SUMMARIZE_PROMPT.format(query=query, content="{}")
+        for u, content in zip([url, *urls], contents):
+            content = content.inner_text
+            chunk_summaries = []
+            for prompt in generate_prompt_chunk(content, prompt_template, self.llm.model, system_text, CONFIG.max_tokens_rsp):
+                logger.debug(prompt)
+                summary = await self._aask(prompt, [system_text])
+                if summary == "Not relevant.":
+                    continue
+                chunk_summaries.append(summary)
+
+            if not chunk_summaries:
+                summaries[u] = None
+                continue
+
+            if len(chunk_summaries) == 1:
+                summaries[u] = chunk_summaries[0]
+                continue
+
+            content = "\n".join(chunk_summaries)
+            prompt = WEB_BROWSE_AND_SUMMARIZE_PROMPT.format(query=query, content=content)
+            summary = await self._aask(prompt, [system_text])
+            summaries[u] = summary
+        return summaries
+
+
+class ConductResearch(Action):
+    """Action class to conduct research and generate a research report."""
+    def __init__(self, *args, **kwargs):
+        super().__init__(*args, **kwargs)
+        if CONFIG.model_for_researcher_report:
+            self.llm.model = CONFIG.model_for_researcher_report
+
+    async def run(
+        self,
+        topic: str,
+        content: str,
+        system_text: str = RESEARCH_BASE_SYSTEM,
+    ) -> str:
+        """Run the action to conduct research and generate a research report.
+
+        Args:
+            topic: The research topic.
+            content: The content for research.
+            system_text: The system text.
+
+        Returns:
+            The generated research report.
+        """
+        prompt = CONDUCT_RESEARCH_PROMPT.format(topic=topic, content=content)
+        logger.debug(prompt)
+        self.llm.auto_max_tokens = True
+        return await self._aask(prompt, [system_text])
+
+
+def get_research_system_text(topic: str, language: str):
+    """Get the system text for conducting research.
+
+    Args:
+        topic: The research topic.
+        language: The language for the system text.
+
+    Returns:
+        The system text for conducting research.
+    """
+    return " ".join((RESEARCH_TOPIC_SYSTEM.format(topic=topic), LANG_PROMPT.format(language=language)))
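Inside `CollectLinks.run`, `gen_msg` yields a prompt containing every search result, then drops the last entry of whichever keyword currently has the most results, and the generator is handed to `reduce_message_length`, presumably so it can settle on a prompt that fits the model's budget. The sketch below reproduces that shrinking pattern in isolation. `shrinking_prompts`, `first_that_fits`, and the use of `len()` as a stand-in tokenizer are illustrative assumptions; MetaGPT's `reduce_message_length` counts real model tokens, and its selection logic is not part of this diff.

```python
from typing import Callable, Iterable, Iterator


def shrinking_prompts(keywords: list[str], results: list[list[str]]) -> Iterator[str]:
    """Yield prompts that shrink by one search result per step.

    Like gen_msg, each step removes the last entry of the keyword that
    currently has the most results. Unlike gen_msg, this works on copies
    of the lists and also yields the final prompt with every result removed.
    """
    results = [list(r) for r in results]  # copy so the caller's lists stay untouched
    while True:
        yield "\n".join(
            f"#### Keyword: {k}\n Search Result: {r}\n" for k, r in zip(keywords, results)
        )
        longest = max(results, key=len, default=[])
        if not longest:
            break  # nothing left to drop
        longest.pop()


def first_that_fits(prompts: Iterable[str], budget: int, count_tokens: Callable[[str], int]) -> str:
    """Return the first (most complete) prompt whose size is within budget."""
    for prompt in prompts:
        if count_tokens(prompt) <= budget:
            return prompt
    raise ValueError("no prompt fits within the budget")


if __name__ == "__main__":
    keywords = ["dataiku", "datarobot"]
    hits = [["r1", "r2", "r3"], ["r4"]]
    # len() counts characters here, standing in for a real tokenizer.
    print(first_that_fits(shrinking_prompts(keywords, hits), budget=80, count_tokens=len))
```

Because the generator is lazy, only as many prompts are built as are needed to find one that fits.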

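In `_search_and_rank_urls`, the fallback `list(range(max_results))` can point past the end of `results` when the search engine returns fewer than `max_results` hits, and an LLM reply with out-of-range integers passes the `assert` check but still raises `IndexError`; the `assert` itself is also stripped when Python runs with `-O`. A more defensive parser might look like the following sketch. `parse_ranked_indices` is a hypothetical helper, not part of this commit.

```python
import json


def parse_ranked_indices(reply: str, num_results: int) -> list[int]:
    """Parse an LLM reply such as "[2, 0, 5]" into valid, de-duplicated indices.

    Falls back to the original order when the reply is not a JSON list of ints.
    Out-of-range and repeated indices are dropped, so every returned index is
    safe to use on a result list of length num_results.
    """
    try:
        indices = json.loads(reply)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(indices, list) or not all(
            isinstance(i, int) and not isinstance(i, bool) for i in indices
        ):
            raise ValueError("expected a JSON list of integers")
    except ValueError:  # json.JSONDecodeError is a subclass of ValueError
        return list(range(num_results))
    seen = set()
    valid = []
    for i in indices:
        if 0 <= i < num_results and i not in seen:
            seen.add(i)
            valid.append(i)
    return valid


if __name__ == "__main__":
    results = [{"link": "https://a.example"}, {"link": "https://b.example"}, {"link": "https://c.example"}]
    order = parse_ranked_indices("[2, 0, 7, 0]", len(results))
    print([results[i]["link"] for i in order])  # ['https://c.example', 'https://a.example']
```

Passing `len(results)` rather than `max_results` is what keeps the fallback in bounds when the search engine returns a short list.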

@@ -27,7 +27,7 @@ Please summarize the cause of the errors and give correction instruction
 Determine the ONE file to rewrite in order to fix the error, for example, xyz.py, or test_xyz.py
 ## Status:
 Determine if all of the code works fine, if so write PASS, else FAIL,
-WRITE ONLY ONE WORD, PASS OR FAIL, IN THI SECTION
+WRITE ONLY ONE WORD, PASS OR FAIL, IN THIS SECTION
 ## Send To:
 Please write Engineer if the errors are due to problematic development codes, and QaEngineer to problematic test codes, and NoOne if there are no errors,
 WRITE ONLY ONE WORD, Engineer OR QaEngineer OR NoOne, IN THIS SECTION.
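The prompt above asks the model to answer the `## Status:` and `## Send To:` sections with exactly one word each. A caller consuming that reply could validate it along the lines of the sketch below. `parse_one_word_sections` and the heading regex are assumptions for illustration, not MetaGPT's own parsing code.

```python
import re

# Words the prompt allows in each one-word section.
ALLOWED = {
    "Status": {"PASS", "FAIL"},
    "Send To": {"Engineer", "QaEngineer", "NoOne"},
}

# Matches a level-2 heading line such as "## Status:" and captures its title.
HEADING = re.compile(r"^##\s*(.+?):?\s*$", re.MULTILINE)


def parse_one_word_sections(reply: str) -> dict[str, str]:
    """Return {"Status": ..., "Send To": ...} from an LLM reply, validating each word."""
    parsed = {}
    # With one capture group, split returns [preamble, title1, body1, title2, body2, ...].
    parts = HEADING.split(reply)
    for title, body in zip(parts[1::2], parts[2::2]):
        title = title.strip()
        if title not in ALLOWED:
            continue  # other sections are free-form text
        words = body.split()
        word = words[0].strip(".,") if words else ""
        if word not in ALLOWED[title]:
            raise ValueError(f"unexpected value {word!r} in section {title!r}")
        parsed[title] = word
    return parsed


if __name__ == "__main__":
    reply = "## Status:\nFAIL\n## Send To:\nQaEngineer\n"
    print(parse_one_word_sections(reply))  # {'Status': 'FAIL', 'Send To': 'QaEngineer'}
```

Raising on an unexpected word, rather than guessing, makes a malformed reply visible to the caller, which can then retry the request.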

@@ -4,14 +4,14 @@
 Provide configuration, singleton
 """
 import os
-import openai

+import openai
 import yaml

 from metagpt.const import PROJECT_ROOT
 from metagpt.logs import logger
-from metagpt.utils.singleton import Singleton
 from metagpt.tools import SearchEngineType, WebBrowserEngineType
+from metagpt.utils.singleton import Singleton


 class NotConfiguredException(Exception):
@@ -46,7 +46,6 @@ class Config(metaclass=Singleton):
         self.openai_api_key = self._get("OPENAI_API_KEY")
         if not self.openai_api_key or "YOUR_API_KEY" == self.openai_api_key:
             raise NotConfiguredException("Set OPENAI_API_KEY first")
-
         self.openai_api_base = self._get("OPENAI_API_BASE")
         if not self.openai_api_base or "YOUR_API_BASE" == self.openai_api_base:
             openai_proxy = self._get("OPENAI_PROXY") or self.global_proxy
@@ -67,22 +66,22 @@ class Config(metaclass=Singleton):
         self.google_api_key = self._get("GOOGLE_API_KEY")
         self.google_cse_id = self._get("GOOGLE_CSE_ID")
         self.search_engine = self._get("SEARCH_ENGINE", SearchEngineType.SERPAPI_GOOGLE)
-
+
         self.web_browser_engine = WebBrowserEngineType(self._get("WEB_BROWSER_ENGINE", "playwright"))
         self.playwright_browser_type = self._get("PLAYWRIGHT_BROWSER_TYPE", "chromium")
         self.selenium_browser_type = self._get("SELENIUM_BROWSER_TYPE", "chrome")
-
+
         self.long_term_memory = self._get('LONG_TERM_MEMORY', False)
         if self.long_term_memory:
             logger.warning("LONG_TERM_MEMORY is True")
         self.max_budget = self._get("MAX_BUDGET", 10.0)
         self.total_cost = 0.0
-        self.puppeteer_config = self._get("PUPPETEER_CONFIG","")
-        self.mmdc = self._get("MMDC","mmdc")
-        self.update_costs = self._get("UPDATE_COSTS",True)
-        self.calc_usage = self._get("CALC_USAGE",True)
-
-
+        self.puppeteer_config = self._get("PUPPETEER_CONFIG", "")
+        self.mmdc = self._get("MMDC", "mmdc")
+        self.update_costs = self._get("UPDATE_COSTS", True)
+        self.calc_usage = self._get("CALC_USAGE", True)
+        self.model_for_researcher_summary = self._get("MODEL_FOR_RESEARCHER_SUMMARY")
+        self.model_for_researcher_report = self._get("MODEL_FOR_RESEARCHER_REPORT")

     def _init_with_config_files_and_env(self, configs: dict, yaml_file):
         """Load from config/key.yaml, config/config.yaml and env, in decreasing order of priority"""
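The docstring of `_init_with_config_files_and_env` gives the lookup order: `config/key.yaml` first, then `config/config.yaml`, then environment variables. The sketch below shows that precedence with `collections.ChainMap`. The `load_settings` name and the plain-dict inputs are assumptions; MetaGPT's method reads the YAML files itself and may merge them differently.

```python
import os
from collections import ChainMap
from typing import Mapping, Optional


def load_settings(
    key_yaml: Mapping, config_yaml: Mapping, env: Optional[Mapping] = None
) -> ChainMap:
    """Resolve settings with priority key.yaml > config.yaml > environment.

    ChainMap searches its mappings left to right and returns the first hit,
    so a key defined in key.yaml shadows the same key in config.yaml or env.
    """
    env = os.environ if env is None else env
    # dict() snapshots each source so later edits to the inputs do not leak in.
    return ChainMap(dict(key_yaml), dict(config_yaml), dict(env))


if __name__ == "__main__":
    settings = load_settings(
        key_yaml={"OPENAI_API_KEY": "sk-from-key-yaml"},
        config_yaml={"OPENAI_API_KEY": "YOUR_API_KEY", "MODEL_FOR_RESEARCHER_SUMMARY": "gpt-3.5-turbo"},
        env={"MODEL_FOR_RESEARCHER_SUMMARY": "from-env"},
    )
    print(settings["OPENAI_API_KEY"])  # sk-from-key-yaml
    print(settings["MODEL_FOR_RESEARCHER_SUMMARY"])  # gpt-3.5-turbo
    print(settings.get("UPDATE_COSTS", True))  # True (falls back to the default)
```

Keeping secrets such as `OPENAI_API_KEY` in the highest-priority file lets `config.yaml` keep its `YOUR_API_KEY` placeholder under version control.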

@@ -32,5 +32,6 @@ UT_PY_PATH = UT_PATH / "files/ut/"
 API_QUESTIONS_PATH = UT_PATH / "files/question/"
 YAPI_URL = "http://yapi.deepwisdomai.com/"
 TMP = PROJECT_ROOT / 'tmp'
+RESEARCH_PATH = DATA_PATH / "research"

 MEM_TTL = 24 * 30 * 3600

|

|

@ -1,4 +1,3 @@
#!/usr/bin/env python
# -*- coding: utf-8 -*-
"""
@Time : 2023/5/5 23:08

@ -7,10 +6,11 @@
"""
import asyncio
import time
from functools import wraps
from typing import NamedTuple

import openai
from openai.error import APIConnectionError
from tenacity import retry, stop_after_attempt, after_log, wait_fixed, retry_if_exception_type

from metagpt.config import CONFIG
from metagpt.logs import logger

@ -20,33 +20,22 @@ from metagpt.utils.token_counter import (
TOKEN_COSTS,
count_message_tokens,
count_string_tokens,
get_max_completion_tokens,
)


def retry(max_retries):
def decorator(f):
@wraps(f)
async def wrapper(*args, **kwargs):
for i in range(max_retries):
try:
return await f(*args, **kwargs)
except Exception:
if i == max_retries - 1:
raise
await asyncio.sleep(2 ** i)
return wrapper
return decorator


class RateLimiter:
"""Rate control class, each call goes through wait_if_needed, sleep if rate control is needed"""

def __init__(self, rpm):
self.last_call_time = 0
self.interval = 1.1 * 60 / rpm # Here 1.1 is used because even if the calls are made strictly according to time, they will still be QOS'd; consider switching to simple error retry later
# Here 1.1 is used because even if the calls are made strictly according to time,
# they will still be QOS'd; consider switching to simple error retry later
self.interval = 1.1 * 60 / rpm
self.rpm = rpm

def split_batches(self, batch):
return [batch[i:i + self.rpm] for i in range(0, len(batch), self.rpm)]
return [batch[i : i + self.rpm] for i in range(0, len(batch), self.rpm)]

async def wait_if_needed(self, num_requests):
current_time = time.time()
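`RateLimiter` spaces calls `interval = 1.1 * 60 / rpm` seconds apart. That is an even spread of `rpm` requests per minute plus a 10% safety margin; at the default `RPM` of 10 read later in the diff, it comes to 6.6 seconds. `split_batches` cuts a list into consecutive chunks of at most `rpm` items.

The body of `wait_if_needed` is cut off in this view, so the waiting logic below is an assumption. It is a minimal sketch of a limiter that sleeps until `interval` seconds have passed since the previous call, and it drops the `num_requests` parameter. `SimpleRateLimiter` is a hypothetical name.

```python
import asyncio
import time
from typing import Optional


class SimpleRateLimiter:
    """Hypothetical sketch; the real wait_if_needed body is not shown in the diff."""

    def __init__(self, rpm: int):
        self.rpm = rpm
        # Spread rpm calls evenly over 60 s, plus the diff's 10% safety margin.
        self.interval = 1.1 * 60 / rpm
        self.last_call_time: Optional[float] = None

    def split_batches(self, batch: list) -> list:
        # Same slicing as the diff: consecutive chunks of at most `rpm` items.
        return [batch[i : i + self.rpm] for i in range(0, len(batch), self.rpm)]

    async def wait_if_needed(self) -> None:
        # Sleep just long enough that consecutive calls start >= interval apart.
        now = time.monotonic()
        if self.last_call_time is not None:
            remaining = self.interval - (now - self.last_call_time)
            if remaining > 0:
                await asyncio.sleep(remaining)
        self.last_call_time = time.monotonic()


if __name__ == "__main__":
    limiter = SimpleRateLimiter(rpm=10)
    print(limiter.interval)  # 6.6
    print([len(b) for b in limiter.split_batches(list(range(23)))])  # [10, 10, 3]
```

The sketch uses `time.monotonic()` instead of `time.time()` so that wall-clock adjustments cannot shorten or lengthen the wait.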

@ -69,6 +58,7 @@ class Costs(NamedTuple):

class CostManager(metaclass=Singleton):
"""Calculate the cost of API usage"""

def __init__(self):
self.total_prompt_tokens = 0
self.total_completion_tokens = 0

@ -86,13 +76,12 @@ class CostManager(metaclass=Singleton):
"""
self.total_prompt_tokens += prompt_tokens
self.total_completion_tokens += completion_tokens
cost = (
prompt_tokens * TOKEN_COSTS[model]["prompt"]
+ completion_tokens * TOKEN_COSTS[model]["completion"]
) / 1000
cost = (prompt_tokens * TOKEN_COSTS[model]["prompt"] + completion_tokens * TOKEN_COSTS[model]["completion"]) / 1000
self.total_cost += cost
logger.info(f"Total running cost: ${self.total_cost:.3f} | Max budget: ${CONFIG.max_budget:.3f} | "
f"Current cost: ${cost:.3f}, {prompt_tokens=}, {completion_tokens=}")
logger.info(
f"Total running cost: ${self.total_cost:.3f} | Max budget: ${CONFIG.max_budget:.3f} | "
f"Current cost: ${cost:.3f}, prompt_tokens: {prompt_tokens}, completion_tokens: {completion_tokens}"
)
CONFIG.total_cost = self.total_cost

def get_total_prompt_tokens(self):
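The cost line in this hunk treats `TOKEN_COSTS` prices as per 1K tokens: cost = (prompt_tokens × prompt price + completion_tokens × completion price) / 1000.

A worked example with placeholder prices of 0.03 and 0.06 USD per 1K tokens (these are not MetaGPT's table values), for 1500 prompt and 500 completion tokens:

(1500 × 0.03 + 500 × 0.06) / 1000 = (45 + 30) / 1000 = 0.075 USD.

The sketch reproduces the formula and the log format. `CostTracker` is a hypothetical class modeled on the counters shown in the diff.

```python
# Placeholder prices in USD per 1K tokens; not MetaGPT's TOKEN_COSTS values.
TOKEN_COSTS = {"example-model": {"prompt": 0.03, "completion": 0.06}}


def request_cost(model: str, prompt_tokens: int, completion_tokens: int) -> float:
    # Same formula as the diff: prices are per 1K tokens, hence the / 1000.
    prices = TOKEN_COSTS[model]
    return (prompt_tokens * prices["prompt"] + completion_tokens * prices["completion"]) / 1000


class CostTracker:
    """Hypothetical running total, modeled on the total_* counters in CostManager."""

    def __init__(self, max_budget: float):
        self.max_budget = max_budget
        self.total_prompt_tokens = 0
        self.total_completion_tokens = 0
        self.total_cost = 0.0

    def update_cost(self, model: str, prompt_tokens: int, completion_tokens: int) -> float:
        self.total_prompt_tokens += prompt_tokens
        self.total_completion_tokens += completion_tokens
        cost = request_cost(model, prompt_tokens, completion_tokens)
        self.total_cost += cost
        print(
            f"Total running cost: ${self.total_cost:.3f} | Max budget: ${self.max_budget:.3f} | "
            f"Current cost: ${cost:.3f}, prompt_tokens: {prompt_tokens}, completion_tokens: {completion_tokens}"
        )
        return cost


if __name__ == "__main__":
    CostTracker(max_budget=10.0).update_cost("example-model", 1500, 500)
```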

@ -127,14 +116,25 @@ class CostManager(metaclass=Singleton):
return Costs(self.total_prompt_tokens, self.total_completion_tokens, self.total_cost, self.total_budget)


def log_and_reraise(retry_state):
logger.error(f"Retry attempts exhausted. Last exception: {retry_state.outcome.exception()}")
logger.warning("""
Recommend going to https://deepwisdom.feishu.cn/wiki/MsGnwQBjiif9c3koSJNcYaoSnu4#part-XdatdVlhEojeAfxaaEZcMV3ZniQ
See FAQ 5.8
""")
raise retry_state.outcome.exception()


class OpenAIGPTAPI(BaseGPTAPI, RateLimiter):
"""
Check https://platform.openai.com/examples for examples
"""

def __init__(self):
self.__init_openai(CONFIG)
self.llm = openai
self.model = CONFIG.openai_api_model
self.auto_max_tokens = False
self._cost_manager = CostManager()
RateLimiter.__init__(self, rpm=self.rpm)

@ -148,10 +148,7 @@ class OpenAIGPTAPI(BaseGPTAPI, RateLimiter):
self.rpm = int(config.get("RPM", 10))

async def _achat_completion_stream(self, messages: list[dict]) -> str:
response = await openai.ChatCompletion.acreate(
**self._cons_kwargs(messages),
stream=True
)
response = await openai.ChatCompletion.acreate(**self._cons_kwargs(messages), stream=True)

# create variables to collect the stream of chunks
collected_chunks = []

@ -159,41 +156,42 @@ class OpenAIGPTAPI(BaseGPTAPI, RateLimiter):
# iterate through the stream of events
async for chunk in response:
collected_chunks.append(chunk) # save the event response
chunk_message = chunk['choices'][0]['delta'] # extract the message
chunk_message = chunk["choices"][0]["delta"] # extract the message
collected_messages.append(chunk_message) # save the message
if "content" in chunk_message:
print(chunk_message["content"], end="")
print()

full_reply_content = ''.join([m.get('content', '') for m in collected_messages])
full_reply_content = "".join([m.get("content", "") for m in collected_messages])
usage = self._calc_usage(messages, full_reply_content)
self._update_costs(usage)
return full_reply_content

def _cons_kwargs(self, messages: list[dict]) -> dict:
if CONFIG.openai_api_type == 'azure':
if CONFIG.openai_api_type == "azure":
kwargs = {
"deployment_id": CONFIG.deployment_id,
"messages": messages,
"max_tokens": CONFIG.max_tokens_rsp,
"max_tokens": self.get_max_tokens(messages),
"n": 1,
"stop": None,
"temperature": 0.3
"temperature": 0.3,
}
else:
kwargs = {
"model": self.model,
"messages": messages,
"max_tokens": CONFIG.max_tokens_rsp,
"max_tokens": self.get_max_tokens(messages),
"n": 1,
"stop": None,
"temperature": 0.3
"temperature": 0.3,
}
kwargs["timeout"] = 3
return kwargs

async def _achat_completion(self, messages: list[dict]) -> dict:
rsp = await self.llm.ChatCompletion.acreate(**self._cons_kwargs(messages))
self._update_costs(rsp.get('usage'))
self._update_costs(rsp.get("usage"))
return rsp

def _chat_completion(self, messages: list[dict]) -> dict:
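`_achat_completion_stream` rebuilds the full reply from streamed deltas. It reads `chunk["choices"][0]["delta"]`, prints each `content` piece as it arrives, and joins all deltas with `m.get("content", "")` so that deltas without content (such as a role-only first delta) add nothing.

The sketch below replays that loop against a fake async stream. The chunk dicts and the `fake_stream` generator are hypothetical and only mimic the dict shape the diff indexes into. They do not call the OpenAI client.

```python
import asyncio
from typing import AsyncIterator


async def fake_stream() -> AsyncIterator[dict]:
    # Hypothetical chunks in the shape the diff reads: chunk["choices"][0]["delta"].
    # The first delta carries only a role and the last is empty.
    for delta in ({"role": "assistant"}, {"content": "Hello"}, {"content": ", world"}, {}):
        yield {"choices": [{"delta": delta}]}


async def collect_reply(response: AsyncIterator[dict]) -> str:
    collected_messages = []
    async for chunk in response:
        chunk_message = chunk["choices"][0]["delta"]  # extract the delta
        collected_messages.append(chunk_message)
        if "content" in chunk_message:
            print(chunk_message["content"], end="")  # print tokens in place
    print()
    # Deltas without "content" contribute an empty string.
    return "".join(m.get("content", "") for m in collected_messages)


if __name__ == "__main__":
    print(repr(asyncio.run(collect_reply(fake_stream()))))
```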

@ -211,7 +209,13 @@ class OpenAIGPTAPI(BaseGPTAPI, RateLimiter):
# messages = self.messages_to_dict(messages)
return await self._achat_completion(messages)

@retry(max_retries=6)
@retry(
stop=stop_after_attempt(3),
wait=wait_fixed(1),
after=after_log(logger, logger.level('WARNING').name),
retry=retry_if_exception_type(APIConnectionError),
retry_error_callback=log_and_reraise,
)
async def acompletion_text(self, messages: list[dict], stream=False) -> str:
"""when streaming, print each token in place."""
if stream:
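The new `@retry(...)` decorator on `acompletion_text` is configured with tenacity and defines this policy:

- at most 3 attempts
- a fixed 1-second wait between attempts
- retry only on `APIConnectionError`
- once attempts run out, `log_and_reraise` logs and re-raises the last exception

The sketch below is not tenacity. It is a hypothetical standard-library decorator, `retry_on`, that expresses the same policy, and `FlakyConnectionError` stands in for `openai.error.APIConnectionError`. Exceptions of other types propagate immediately, as with `retry_if_exception_type`.

```python
import asyncio
import functools
from typing import Type


def retry_on(exc_type: Type[BaseException], attempts: int = 3, wait_seconds: float = 1.0):
    """Hypothetical stdlib stand-in for the tenacity policy in the diff (attempts >= 1)."""

    def decorator(func):
        @functools.wraps(func)
        async def wrapper(*args, **kwargs):
            for attempt in range(1, attempts + 1):
                try:
                    return await func(*args, **kwargs)
                except exc_type as e:  # other exception types are not retried
                    last_exc = e
                    if attempt < attempts:
                        await asyncio.sleep(wait_seconds)  # fixed wait, like wait_fixed
            # Attempts exhausted: log and re-raise, like log_and_reraise.
            print(f"Retry attempts exhausted. Last exception: {last_exc!r}")
            raise last_exc

        return wrapper

    return decorator


class FlakyConnectionError(Exception):
    """Hypothetical stand-in for openai.error.APIConnectionError."""


calls = {"n": 0, "down": 0}


@retry_on(FlakyConnectionError, attempts=3, wait_seconds=0.01)
async def flaky_completion() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise FlakyConnectionError("connection reset")
    return "ok"


@retry_on(FlakyConnectionError, attempts=2, wait_seconds=0.0)
async def always_down() -> str:
    calls["down"] += 1
    raise FlakyConnectionError("still down")


if __name__ == "__main__":
    print(asyncio.run(flaky_completion()), calls["n"])
```

tenacity itself adds features this sketch omits, such as the `after_log` hook that logs after each failed attempt.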

@ -262,3 +266,8 @@ class OpenAIGPTAPI(BaseGPTAPI, RateLimiter):

def get_costs(self) -> Costs:
return self._cost_manager.get_costs()

def get_max_tokens(self, messages: list[dict]):
if not self.auto_max_tokens:
return CONFIG.max_tokens_rsp
return get_max_completion_tokens(messages, self.model, CONFIG.max_tokens_rsp)
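`get_max_tokens` returns the fixed `CONFIG.max_tokens_rsp` unless `auto_max_tokens` is enabled. When it is enabled, it calls `get_max_completion_tokens(messages, self.model, CONFIG.max_tokens_rsp)`, whose body is not part of this diff.

A plausible reading, labeled here as an assumption, is "whatever the prompt leaves of the model's context window, or the default for unknown models". The sketch below makes these simplifications, all hypothetical:

- `CONTEXT_WINDOW` is a stand-in table of context sizes.
- `count_tokens` counts words instead of running a real tokenizer.
- The 2048 default is a placeholder.

```python
# Hypothetical context-window table; real limits depend on the model.
CONTEXT_WINDOW = {"example-model-4k": 4096}


def count_tokens(messages: list) -> int:
    # Crude stand-in for a tokenizer: one token per whitespace-separated word.
    return sum(len(m["content"].split()) for m in messages)


def max_completion_tokens(messages: list, model: str, default: int) -> int:
    # Assumed behaviour: what the prompt leaves of the context window,
    # or the configured default when the model's window is unknown.
    if model not in CONTEXT_WINDOW:
        return default
    return CONTEXT_WINDOW[model] - count_tokens(messages)


def get_max_tokens(messages: list, model: str, auto_max_tokens: bool, max_tokens_rsp: int = 2048) -> int:
    # Mirrors the branch in the diff: fixed budget unless auto_max_tokens is on.
    if not auto_max_tokens:
        return max_tokens_rsp
    return max_completion_tokens(messages, model, max_tokens_rsp)


messages = [{"role": "user", "content": "summarize this short text please"}]

if __name__ == "__main__":
    print(get_max_tokens(messages, "example-model-4k", auto_max_tokens=True))
```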

93
metagpt/roles/researcher.py
Normal file

@ -0,0 +1,93 @@
#!/usr/bin/env python

import asyncio

from pydantic import BaseModel

from metagpt.actions import CollectLinks, ConductResearch, WebBrowseAndSummarize
from metagpt.actions.research import get_research_system_text
from metagpt.const import RESEARCH_PATH
from metagpt.logs import logger
from metagpt.roles import Role
from metagpt.schema import Message


class Report(BaseModel):
topic: str
links: dict[str, list[str]] = None
summaries: list[tuple[str, str]] = None
content: str = ""


class Researcher(Role):
def __init__(
self,
name: str = "David",
profile: str = "Researcher",
goal: str = "Gather information and conduct research",
constraints: str = "Ensure accuracy and relevance of information",
language: str = "en-us",
**kwargs,
):
super().__init__(name, profile, goal, constraints, **kwargs)
self._init_actions([CollectLinks(name), WebBrowseAndSummarize(name), ConductResearch(name)])
self.language = language
if language not in ("en-us", "zh-cn"):
logger.warning(f"The language `{language}` has not been tested, it may not work.")

async def _think(self) -> None:
if self._rc.todo is None:
self._set_state(0)
return

if self._rc.state + 1 < len(self._states):
self._set_state(self._rc.state + 1)
else:
self._rc.todo = None

async def _act(self) -> Message:
logger.info(f"{self._setting}: ready to {self._rc.todo}")
todo = self._rc.todo
msg = self._rc.memory.get(k=1)[0]
if isinstance(msg.instruct_content, Report):
instruct_content = msg.instruct_content
topic = instruct_content.topic
else:
topic = msg.content

research_system_text = get_research_system_text(topic, self.language)
if isinstance(todo, CollectLinks):
links = await todo.run(topic, 4, 4)
ret = Message("", Report(topic=topic, links=links), role=self.profile, cause_by=type(todo))
elif isinstance(todo, WebBrowseAndSummarize):
links = instruct_content.links
todos = (todo.run(*url, query=query, system_text=research_system_text) for (query, url) in links.items())
summaries = await asyncio.gather(*todos)
summaries = list((url, summary) for i in summaries for (url, summary) in i.items() if summary)
ret = Message("", Report(topic=topic, summaries=summaries), role=self.profile, cause_by=type(todo))
else:
summaries = instruct_content.summaries
summary_text = "\n---\n".join(f"url: {url}\nsummary: {summary}" for (url, summary) in summaries)
content = await self._rc.todo.run(topic, summary_text, system_text=research_system_text)
ret = Message("", Report(topic=topic, content=content), role=self.profile, cause_by=type(self._rc.todo))
self._rc.memory.add(ret)
return ret

async def _react(self) -> Message:
while True:
await self._think()
if self._rc.todo is None:
break
msg = await self._act()
report = msg.instruct_content
self.write_report(report.topic, report.content)
return msg

def write_report(self, topic: str, content: str):
filepath = RESEARCH_PATH / f"{topic}.md"
filepath.write_text(content)


if __name__ == "__main__":
role = Researcher(language="en-us")
asyncio.run(role.run("dataiku vs. datarobot"))
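`Researcher._act` runs three steps in order: `CollectLinks`, then `WebBrowseAndSummarize`, then `ConductResearch`. In the middle step, each search query produces one result, and the results are flattened into `(url, summary)` pairs with empty summaries dropped. The final step joins those pairs into a single text block separated by `---`.

The sketch assumes each per-query result is a `{url: summary}` dict, which is what the `i.items()` call implies. The URLs and summaries are placeholders.

```python
# Placeholder per-query results, shaped like {url: summary} dicts; not real data.
per_query_results = [
    {"https://example.com/a": "Summary of A", "https://example.com/b": None},
    {"https://example.com/c": "Summary of C"},
]


def flatten_summaries(results: list) -> list:
    # Same comprehension as _act: keep (url, summary) pairs, skip empty summaries.
    return [(url, summary) for r in results for (url, summary) in r.items() if summary]


def build_summary_text(summaries: list) -> str:
    # Same join as the final ConductResearch step.
    return "\n---\n".join(f"url: {url}\nsummary: {summary}" for (url, summary) in summaries)


if __name__ == "__main__":
    print(build_summary_text(flatten_summaries(per_query_results)))
```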

@ -14,6 +14,7 @@ class SearchEngineType(Enum):
SERPAPI_GOOGLE = auto()
DIRECT_GOOGLE = auto()
SERPER_GOOGLE = auto()
DUCK_DUCK_GO = auto()
CUSTOM_ENGINE = auto()
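This hunk inserts `DUCK_DUCK_GO` before `CUSTOM_ENGINE`. With `enum.auto()` on a plain `Enum`, each member's value is the previous member's value plus one, so inserting a member shifts the integer value of every member after it.

Code that compares members directly, as `SearchEngine.__init__` does with `engine == SearchEngineType.CUSTOM_ENGINE`, is unaffected. Anything that stores the raw integer values would be affected. The two trimmed enums below are hypothetical illustrations, not the full `SearchEngineType`.

```python
from enum import Enum, auto


class Before(Enum):
    SERPER_GOOGLE = auto()
    CUSTOM_ENGINE = auto()


class After(Enum):
    SERPER_GOOGLE = auto()
    DUCK_DUCK_GO = auto()  # inserted member
    CUSTOM_ENGINE = auto()


if __name__ == "__main__":
    print(Before.CUSTOM_ENGINE.value, After.CUSTOM_ENGINE.value)  # 2 3
```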

@ -7,122 +7,76 @@
"""
from __future__ import annotations

import json
import importlib
from typing import Callable, Coroutine, Literal, overload

from metagpt.config import Config
from metagpt.logs import logger
from metagpt.tools.search_engine_serpapi import SerpAPIWrapper
from metagpt.tools.search_engine_serper import SerperWrapper

config = Config()
from metagpt.config import CONFIG
from metagpt.tools import SearchEngineType


class SearchEngine:
"""
TODO: Merge in Google Search and put it behind a reverse proxy
Note: Google here requires Proxifier or a similar global proxy
- DDG: https://pypi.org/project/duckduckgo-search/
- GOOGLE: https://programmablesearchengine.google.com/controlpanel/overview?cx=63f9de531d0e24de9
"""
def __init__(self, engine=None, run_func=None):
self.config = Config()
self.run_func = run_func
self.engine = engine or self.config.search_engine
"""Class representing a search engine.

@classmethod
def run_google(cls, query, max_results=8):
# results = ddg(query, max_results=max_results)
results = google_official_search(query, num_results=max_results)
logger.info(results)
return results
Args:
engine: The search engine type. Defaults to the search engine specified in the config.
run_func: The function to run the search. Defaults to None.

async def run(self, query: str, max_results=8):
if self.engine == SearchEngineType.SERPAPI_GOOGLE:
api = SerpAPIWrapper()
rsp = await api.run(query)
elif self.engine == SearchEngineType.DIRECT_GOOGLE:
rsp = SearchEngine.run_google(query, max_results)
elif self.engine == SearchEngineType.SERPER_GOOGLE:
api = SerperWrapper()
rsp = await api.run(query)
elif self.engine == SearchEngineType.CUSTOM_ENGINE:
rsp = self.run_func(query)
Attributes:
run_func: The function to run the search.
engine: The search engine type.
"""
def __init__(
self,
engine: SearchEngineType | None = None,
run_func: Callable[[str, int, bool], Coroutine[None, None, str | list[str]]] = None,
):
engine = engine or CONFIG.search_engine
if engine == SearchEngineType.SERPAPI_GOOGLE:
module = "metagpt.tools.search_engine_serpapi"
run_func = importlib.import_module(module).SerpAPIWrapper().run
elif engine == SearchEngineType.SERPER_GOOGLE:
module = "metagpt.tools.search_engine_serper"
run_func = importlib.import_module(module).SerperWrapper().run
elif engine == SearchEngineType.DIRECT_GOOGLE:
module = "metagpt.tools.search_engine_googleapi"
run_func = importlib.import_module(module).GoogleAPIWrapper().run
elif engine == SearchEngineType.DUCK_DUCK_GO:
module = "metagpt.tools.search_engine_ddg"
run_func = importlib.import_module(module).DDGAPIWrapper().run
elif engine == SearchEngineType.CUSTOM_ENGINE:
pass # run_func = run_func
else:
raise NotImplementedError
return rsp
self.engine = engine
self.run_func = run_func

@overload
def run(
self,
query: str,
max_results: int = 8,
as_string: Literal[True] = True,
) -> str:
...

def google_official_search(query: str, num_results: int = 8, focus=['snippet', 'link', 'title']) -> dict | list[dict]:
"""Return the results of a Google search using the official Google API
@overload
def run(
self,
query: str,
max_results: int = 8,
as_string: Literal[False] = False,
) -> list[dict[str, str]]:
...

Args:
query (str): The search query.
num_results (int): The number of results to return.
async def run(self, query: str, max_results: int = 8, as_string: bool = True) -> str | list[dict[str, str]]:
"""Run a search query.

Returns:
str: The results of the search.
"""
Args:
query: The search query.
max_results: The maximum number of results to return. Defaults to 8.
as_string: Whether to return the results as a string or a list of dictionaries. Defaults to True.

from googleapiclient.discovery import build
from googleapiclient.errors import HttpError

try:
api_key = config.google_api_key
custom_search_engine_id = config.google_cse_id

with build("customsearch", "v1", developerKey=api_key) as service:

result = (
service.cse()
.list(q=query, cx=custom_search_engine_id, num=num_results)
.execute()
)
logger.info(result)
# Extract the search result items from the response
search_results = result.get("items", [])

# Create a list of only the URLs from the search results
search_results_details = [{i: j for i, j in item_dict.items() if i in focus} for item_dict in search_results]

except HttpError as e:
# Handle errors in the API call
error_details = json.loads(e.content.decode())

# Check if the error is related to an invalid or missing API key
if error_details.get("error", {}).get(
"code"
) == 403 and "invalid API key" in error_details.get("error", {}).get(
"message", ""
):
return "Error: The provided Google API key is invalid or missing."
else:
return f"Error: {e}"
# google_result can be a list or a string depending on the search results

# Return the list of search result URLs
return search_results_details


def safe_google_results(results: str | list) -> str:
"""
Return the results of a google search in a safe format.

Args:
results (str | list): The search results.

Returns:
str: The results of the search.
"""
if isinstance(results, list):
safe_message = json.dumps(
# FIXME: # .encode("utf-8", "ignore") was removed here, but AutoGPT has it, which is strange
[result for result in results]
)
else:
safe_message = results.encode("utf-8", "ignore").decode("utf-8")
return safe_message


if __name__ == '__main__':
SearchEngine.run(query='wtf')
Returns:
The search results as a string or a list of dictionaries.
"""
return await self.run_func(query, max_results=max_results, as_string=as_string)
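The rewritten `SearchEngine.__init__` maps each `SearchEngineType` to a module path and imports it with `importlib.import_module` only when that engine is selected. As a result, optional dependencies such as `duckduckgo_search` or `google-api-python-client` are needed only for the engine actually in use.

The sketch below expresses the same pattern as a lookup table. The MetaGPT modules are not assumed to be importable here, so the table points at standard-library functions purely for demonstration. `Engine`, `ENGINE_TABLE` and `resolve_engine` are hypothetical names.

```python
import importlib
from enum import Enum, auto
from typing import Callable, Optional


class Engine(Enum):
    JSON_DUMPS = auto()
    TEXTWRAP_SHORTEN = auto()
    CUSTOM = auto()


# engine -> (module path, attribute); stdlib targets stand in for the search wrappers.
ENGINE_TABLE = {
    Engine.JSON_DUMPS: ("json", "dumps"),
    Engine.TEXTWRAP_SHORTEN: ("textwrap", "shorten"),
}


def resolve_engine(engine: Engine, run_func: Optional[Callable] = None) -> Callable:
    if engine == Engine.CUSTOM:
        # Like the diff's CUSTOM_ENGINE branch: use the caller's function as-is.
        return run_func
    try:
        module_path, attr = ENGINE_TABLE[engine]
    except KeyError:
        raise NotImplementedError(engine) from None
    # The import happens only here, when this engine is actually chosen.
    return getattr(importlib.import_module(module_path), attr)


if __name__ == "__main__":
    print(resolve_engine(Engine.JSON_DUMPS)({"a": 1}))
```

A table keeps the engine-to-module mapping in one place. The `if/elif` chain in the diff behaves the same way and keeps each engine's constructor call visible.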

107
metagpt/tools/search_engine_ddg.py
Normal file

@ -0,0 +1,107 @@
#!/usr/bin/env python

from __future__ import annotations

import asyncio
import json
from concurrent import futures
from typing import Literal, overload

from duckduckgo_search import DDGS
from googleapiclient.errors import HttpError

from metagpt.config import CONFIG
from metagpt.logs import logger


class DDGAPIWrapper:
"""Wrapper around duckduckgo_search API.

To use this module, you should have the `duckduckgo_search` Python package installed.
"""
def __init__(
self,
*,
loop: asyncio.AbstractEventLoop | None = None,
executor: futures.Executor | None = None,
):
kwargs = {}
if CONFIG.global_proxy:
kwargs["proxies"] = CONFIG.global_proxy
self.loop = loop
self.executor = executor
self.ddgs = DDGS(**kwargs)

@overload
def run(
self,
query: str,
max_results: int = 8,
as_string: Literal[True] = True,
focus: list[str] | None = None,
) -> str:
...

@overload
def run(
self,
query: str,
max_results: int = 8,
as_string: Literal[False] = False,
focus: list[str] | None = None,
) -> list[dict[str, str]]:
...

async def run(
self,
query: str,
max_results: int = 8,
as_string: bool = True,
) -> str | list[dict]:
"""Return the results of a Google search using the official Google API

Args:
query: The search query.
max_results: The number of results to return.
as_string: A boolean flag to determine the return type of the results. If True, the function will
return a formatted string with the search results. If False, it will return a list of dictionaries
containing detailed information about each search result.

Returns:
The results of the search.
"""
loop = self.loop or asyncio.get_event_loop()
future = loop.run_in_executor(
self.executor,
self._search_from_ddgs,
query,
max_results,
)
try:
search_results = await future
# Extract the search result items from the response

except HttpError as e:
# Handle errors in the API call
logger.exception(f"fail to search {query} for {e}")
search_results = []

# Return the list of search result URLs
if as_string:
return json.dumps(search_results, ensure_ascii=False)
return search_results

def _search_from_ddgs(self, query: str, max_results: int):
return [
{
"link": i["href"],
"snippet": i["body"],
"title": i["title"]
} for (_, i) in zip(range(max_results), self.ddgs.text(query))
]


if __name__ == "__main__":
import fire

fire.Fire(DDGAPIWrapper().run)
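`DDGAPIWrapper` relies on two techniques:

- It moves the blocking `duckduckgo_search` call onto an executor with `loop.run_in_executor`, so the event loop is not blocked.
- It caps the number of results with `zip(range(max_results), self.ddgs.text(query))`. `zip` stops as soon as `range` is exhausted, so the result iterator is advanced at most `max_results` times, even if it is lazy or unbounded.

The sketch below reproduces both techniques. `fake_ddgs_text` is a hypothetical, unbounded stand-in for `DDGS.text` that yields the `href`/`body`/`title` keys the diff reads. It is not the real library.

```python
import asyncio
import json
from typing import Iterator


def fake_ddgs_text(query: str) -> Iterator[dict]:
    # Hypothetical stand-in for a blocking, possibly unbounded result generator.
    n = 0
    while True:
        n += 1
        yield {"href": f"https://example.com/{n}", "body": f"{query} result {n}", "title": f"Result {n}"}


def search_blocking(query: str, max_results: int) -> list:
    # zip() stops when range() runs out, so at most max_results items are pulled.
    return [
        {"link": i["href"], "snippet": i["body"], "title": i["title"]}
        for (_, i) in zip(range(max_results), fake_ddgs_text(query))
    ]


async def run(query: str, max_results: int = 8, as_string: bool = True):
    loop = asyncio.get_running_loop()
    # None selects the loop's default thread pool, like executor=None in the diff.
    results = await loop.run_in_executor(None, search_blocking, query, max_results)
    return json.dumps(results, ensure_ascii=False) if as_string else results


if __name__ == "__main__":
    print(asyncio.run(run("python", max_results=2)))
```

Inside a coroutine, this sketch calls `asyncio.get_running_loop()` rather than the diff's `asyncio.get_event_loop()`; the former always returns the loop that is actually running the coroutine.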

117
metagpt/tools/search_engine_googleapi.py
Normal file

@ -0,0 +1,117 @@
#!/usr/bin/env python
# -*- coding: utf-8 -*-
from __future__ import annotations

import asyncio
import json
from concurrent import futures
from urllib.parse import urlparse

import httplib2
from googleapiclient.discovery import build
from googleapiclient.errors import HttpError

from metagpt.config import CONFIG
from metagpt.logs import logger


class GoogleAPIWrapper:
"""Wrapper around GoogleAPI.

To use this module, you should have the `google-api-python-client` Python package installed
and set property values for the configurations `GOOGLE_API_KEY` and `GOOGLE_CSE_ID`. See
https://programmablesearchengine.google.com/controlpanel/all.
"""
def __init__(
self,
*,
loop: asyncio.AbstractEventLoop | None = None,
executor: futures.Executor | None = None,
):
build_kwargs = {"developerKey": CONFIG.google_api_key}
if CONFIG.global_proxy:
parse_result = urlparse(CONFIG.global_proxy)
proxy_type = parse_result.scheme
if proxy_type == "https":
proxy_type = "http"
build_kwargs["http"] = httplib2.Http(
proxy_info=httplib2.ProxyInfo(
getattr(httplib2.socks, f"PROXY_TYPE_{proxy_type.upper()}"),
parse_result.hostname,
parse_result.port,
),
)
service = build("customsearch", "v1", **build_kwargs)
self.google_api_client = service.cse()
self.custom_search_engine_id = CONFIG.google_cse_id
self.loop = loop
self.executor = executor

async def run(
self,
query: str,
max_results: int = 8,
as_string: bool = True,
focus: list[str] | None = None,
) -> str | list[dict]:
"""Return the results of a Google search using the official Google API.

Args:
query: The search query.
max_results: The number of results to return.
as_string: A boolean flag to determine the return type of the results. If True, the function will
return a formatted string with the search results. If False, it will return a list of dictionaries
containing detailed information about each search result.
focus: Specific information to be focused on from each search result.

Returns:
The results of the search.
"""
loop = self.loop or asyncio.get_event_loop()
future = loop.run_in_executor(
self.executor,
self.google_api_client.list(
q=query,
num=max_results,
cx=self.custom_search_engine_id
).execute
)
try:
result = await future
# Extract the search result items from the response
search_results = result.get("items", [])

except HttpError as e:
# Handle errors in the API call
logger.exception(f"fail to search {query} for {e}")
search_results = []

focus = focus or ["snippet", "link", "title"]
details = [{i: j for i, j in item_dict.items() if i in focus} for item_dict in search_results]
# Return the list of search result URLs